This is the multi-page printable view of this section. Click here to print.

Elastx Documentation

- 1: AI and Other Services

- 1.1: Announcements

- 2: Cloud Connect

- 3: Data Security Manager (beta)

- 4: DBaaS

- 4.1: Announcements

- 4.2: DBaaS Getting Started

- 4.3: FAQ

- 4.4: Managed Service

- 4.5: Overview

- 4.6: Reference

- 4.6.1: Datastore Statuses

- 4.6.2: Glossary

- 4.6.3: Notifications

- 4.6.4: Observability

- 4.6.4.1: Metrics

- 4.6.4.1.1: Introduction

- 4.6.4.1.2: MySQL And MariaDB

- 4.6.4.1.3: PostgreSQL

- 4.6.4.1.4: Redis

- 4.6.4.1.5: System

- 4.6.4.1.6: Valkey

- 4.6.5: Products

- 4.6.5.1: MariaDb

- 4.6.5.1.1: Backup

- 4.6.5.1.2: Configuration

- 4.6.5.1.3: Importing Data

- 4.6.5.1.4: Limitations

- 4.6.5.1.5: Overview

- 4.6.5.1.6: Restore

- 4.6.5.1.7: TLS Connection

- 4.6.5.2: MSSQLServer

- 4.6.5.2.1: Configurations

- 4.6.5.2.2: Limitations

- 4.6.5.2.3: Overview

- 4.6.5.2.4: User Management

- 4.6.5.3: MySQL

- 4.6.5.3.1: Backup

- 4.6.5.3.2: Configuration

- 4.6.5.3.3: Importing Data

- 4.6.5.3.4: Importing Data From AWS RDS

- 4.6.5.3.5: Importing Data From GCP

- 4.6.5.3.6: Limitations

- 4.6.5.3.7: Overview

- 4.6.5.3.8: Restore

- 4.6.5.3.9: TLS Connection

- 4.6.5.3.10: User Management

- 4.6.5.4: PostgreSQL

- 4.6.5.4.1: Backup

- 4.6.5.4.2: Configuration

- 4.6.5.4.3: Extensions

- 4.6.5.4.4: Importing Data

- 4.6.5.4.5: Limitations

- 4.6.5.4.6: Restore

- 4.6.5.5: Redis

- 4.6.5.5.1: Backup

- 4.6.5.5.2: Configuration

- 4.6.5.5.3: User Management

- 4.6.5.6: Valkey

- 4.6.5.6.1: Backup

- 4.6.5.6.2: Configuration

- 4.6.5.6.3: User Management

- 4.6.6: Supported Databases

- 4.7: Changelog

- 4.8: DBaaS Guides

- 4.8.1: Backup and Restore via CLI

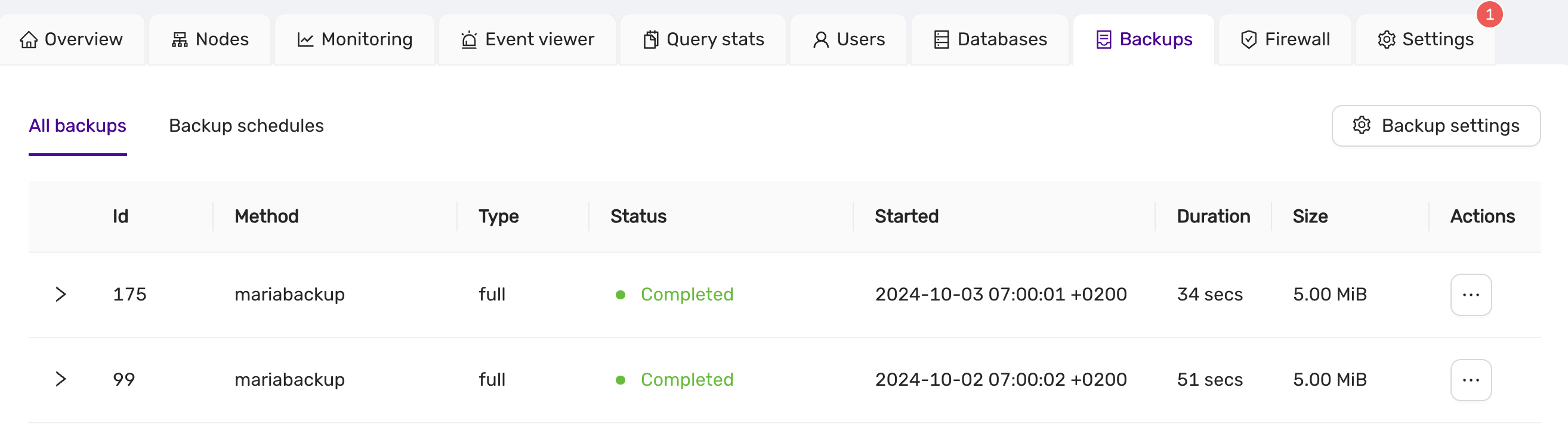

- 4.8.2: Backup and Restore via DBaaS UI

- 4.8.3: Config Management

- 4.8.4: Create Datastore From Backup

- 4.8.5: Database Db Management

- 4.8.6: Database User Management

- 4.8.7: Datastore Settings

- 4.8.8: DBaaS with Terraform

- 4.8.9: Deploy A Datastore

- 4.8.10: Event Viewer

- 4.8.11: Firewall

- 4.8.12: Logs Viewer

- 4.8.13: Observability

- 4.8.14: Parameter Group

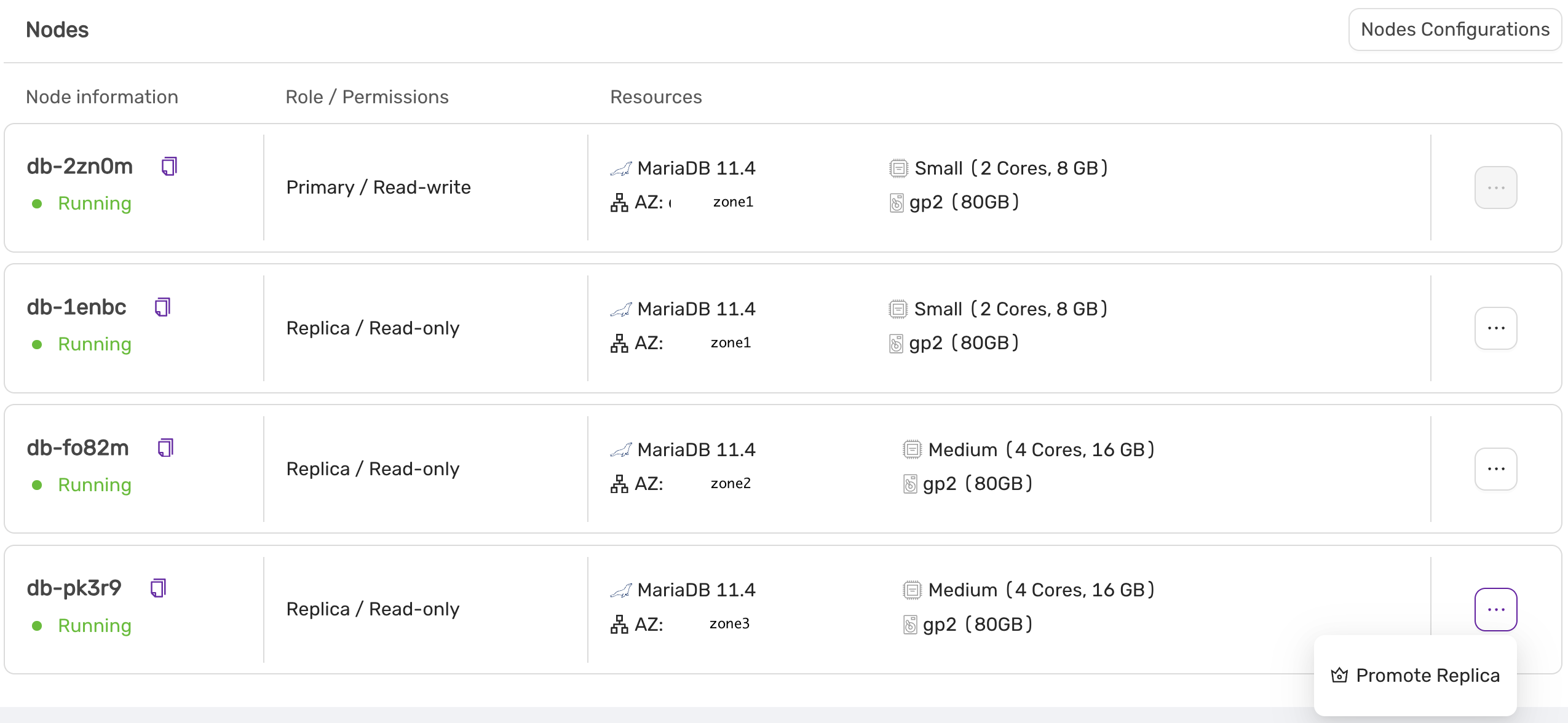

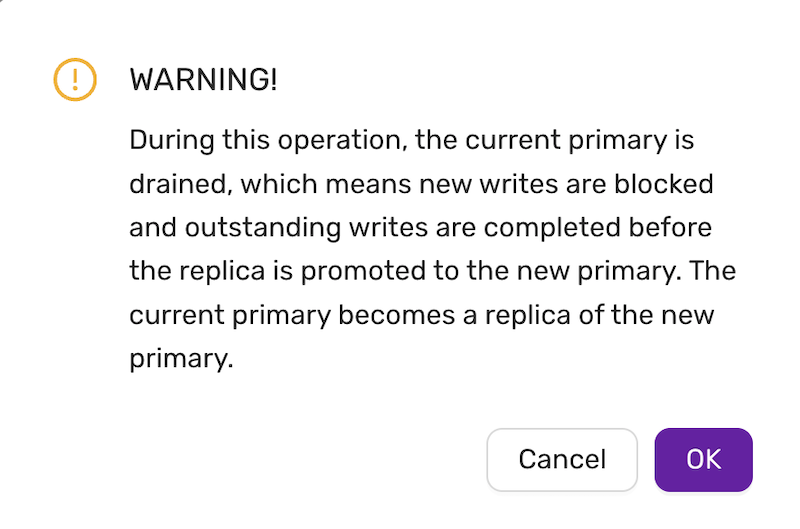

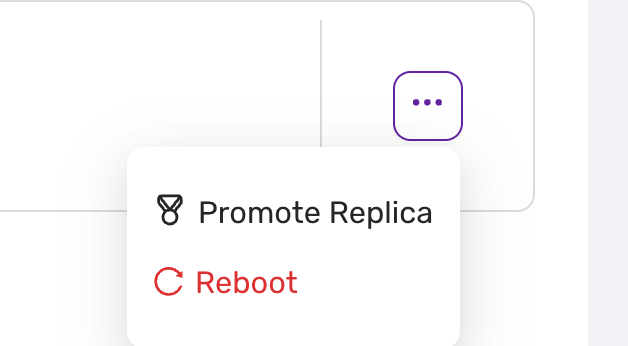

- 4.8.15: Promote A Replica

- 4.8.16: Reboot A Node

- 4.8.17: Restore Backup

- 4.8.18: Scale A Datastore

- 4.8.19: Terraform Provider

- 4.8.20: TLS For Metrics

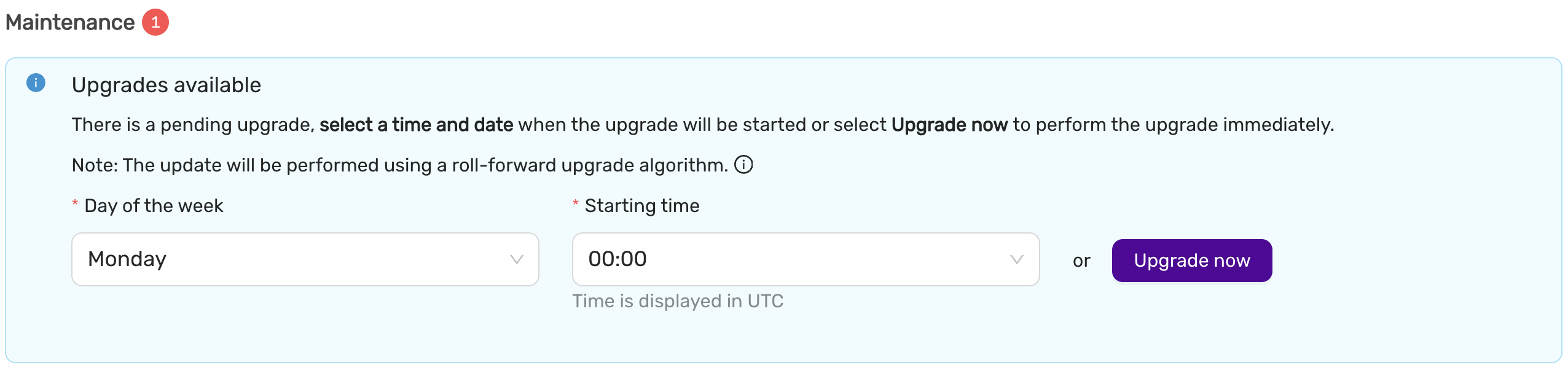

- 4.8.21: Upgrade Lifecycle Mgmt

- 4.8.22: Connect Kubernetes with DBaaS

- 5: Elastx Cloud Platform API (beta)

- 5.1: ⚡ Quick Start Guide

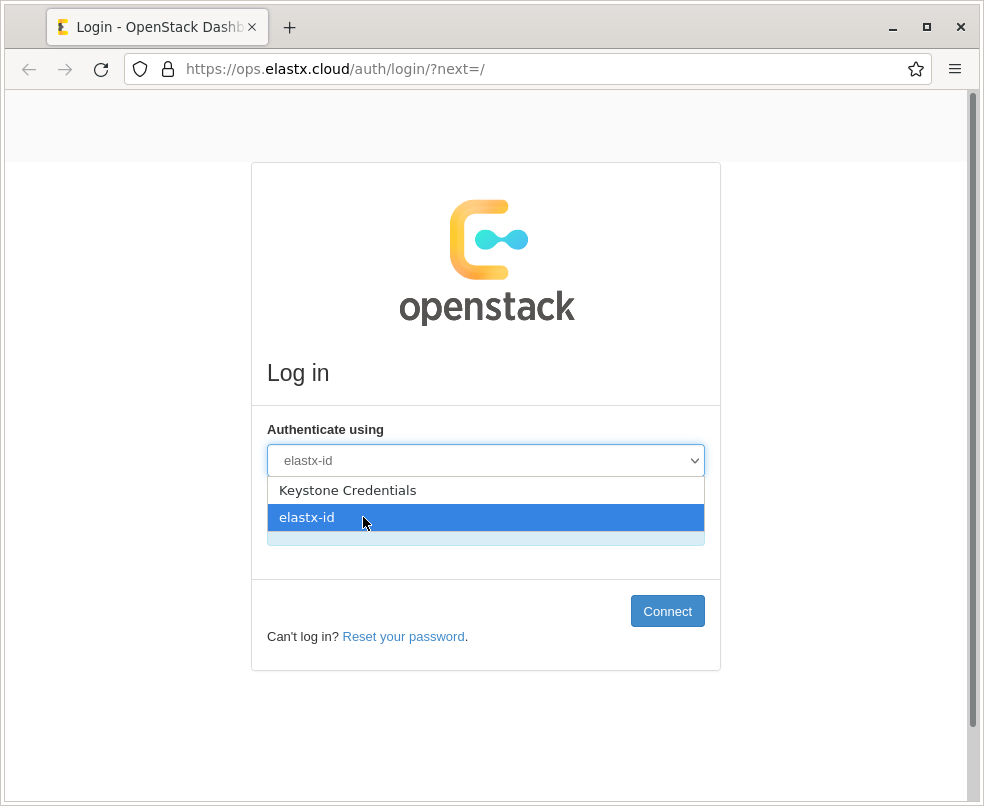

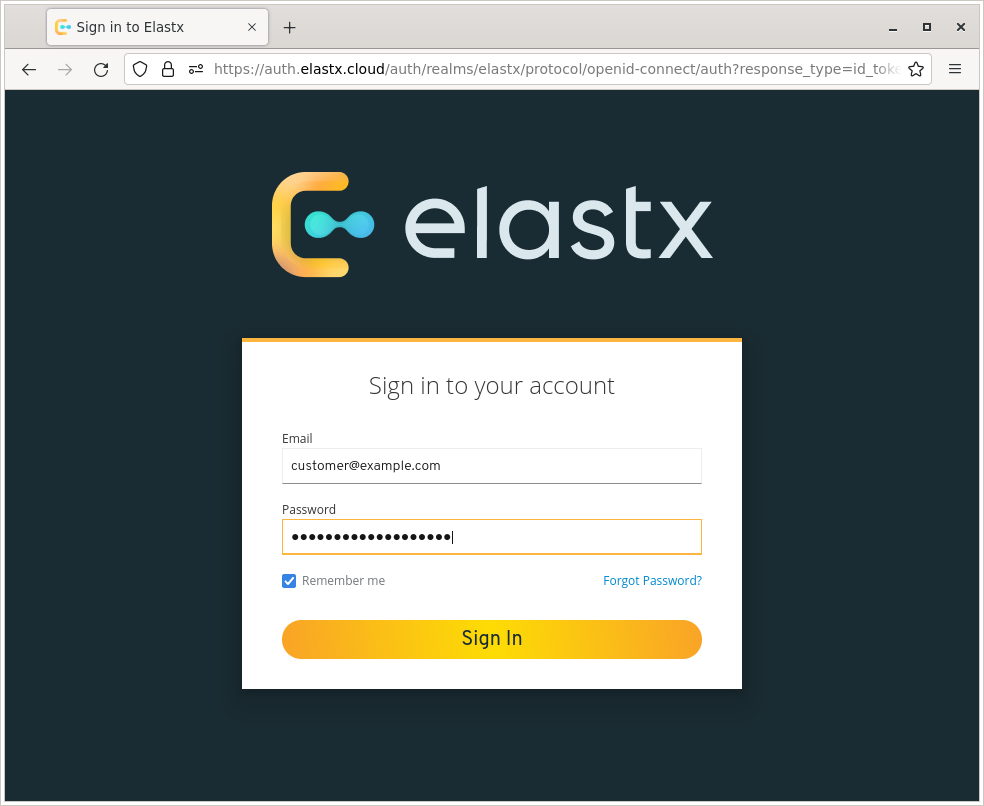

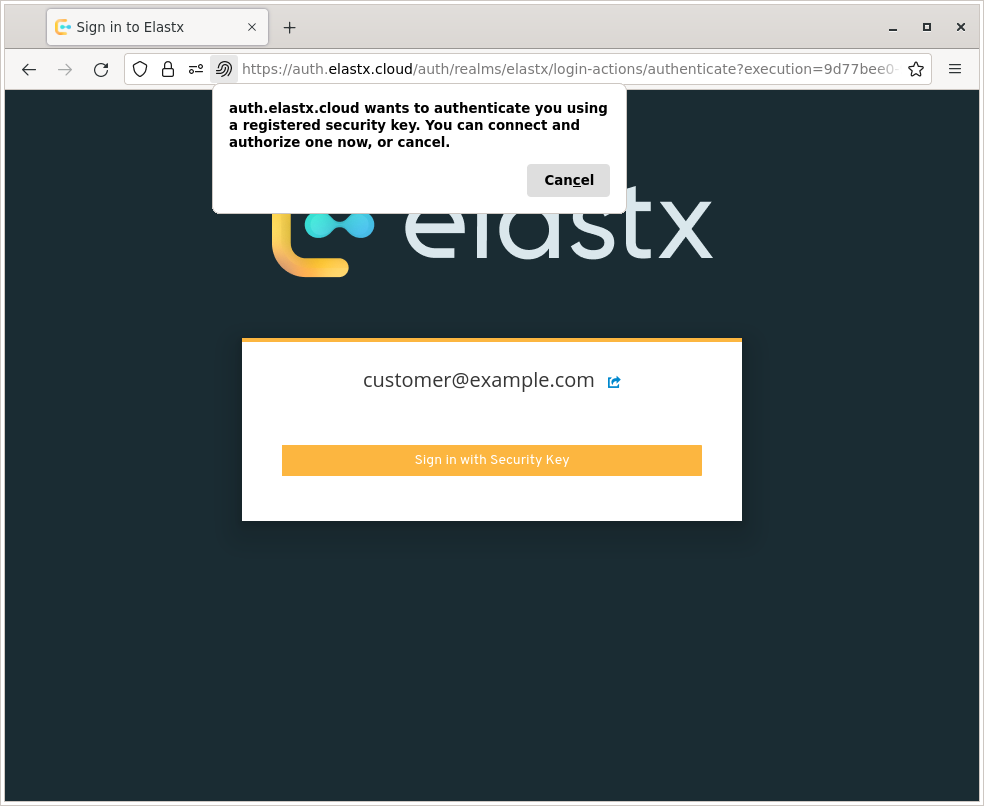

- 6: Elastx Identity Provider

- 6.1: Overview

- 7: Kubernetes CaaS

- 7.1: Announcements

- 7.2: Overview

- 7.3: Getting started

- 7.3.1: Accessing your cluster

- 7.3.2: Auto Healing

- 7.3.3: Auto Scaling

- 7.3.4: Cluster configuration

- 7.3.5: Cluster upgrades

- 7.3.6: Kubernetes API whitelist

- 7.3.7: Order a new cluster

- 7.3.8: Recommendations

- 7.4: Guides

- 7.4.1: Cert-manager and Cloudflare demo

- 7.4.2: Change PV StorageClass

- 7.4.3: Ingress and cert-manager

- 7.4.4: Install and upgrade cert-manager

- 7.4.5: Install and upgrade ingress-nginx

- 7.4.6: Load balancers

- 7.4.7: Migration to Kubernetes CaaS v2

- 7.4.8: Persistent volumes

- 7.4.9: Your first deployment

- 7.5: Changelog

- 7.5.1: Changelog for Kubernetes 1.34

- 7.5.2: Changelog for Kubernetes 1.33

- 7.5.3: Changelog for Kubernetes 1.32

- 7.5.4: Changelog for Kubernetes 1.31

- 7.5.5: Changelog for Kubernetes 1.30

- 7.5.6: Changelog for Kubernetes 1.29

- 7.5.7: Changelog for Kubernetes 1.28

- 7.5.8: Changelog for Kubernetes 1.27

- 7.5.9: Changelog for Kubernetes 1.26

- 7.5.10: Changelog for Kubernetes 1.25

- 7.5.11: Changelog for Kubernetes 1.24

- 7.5.12: Changelog for Kubernetes 1.23

- 7.5.13: Changelog for Kubernetes 1.22

- 7.5.14: Changelog for Kubernetes 1.21

- 7.5.15: Changelog for Kubernetes 1.20

- 7.5.16: Changelog for Kubernetes 1.19

- 7.5.17: Changelog for Kubernetes 1.18

- 7.5.18: Changelog for Kubernetes 1.17

- 8: Mail Relay

- 8.1: Overview

- 8.2: Announcements

- 8.3: Email and DNS

- 8.4: FAQ

- 8.5: Getting started

- 9: OpenStack IaaS

- 9.1: Announcements

- 9.2: Changelog

- 9.2.1: Changelog for OpenStack Train

- 9.2.2: Changelog for OpenStack Ussuri

- 9.2.3: Changelog for OpenStack Wallaby

- 9.2.4: Changelog for OpenStack Yoga

- 9.3: Overview

- 9.4: Network

- 9.5: Guides

- 9.5.1: Adjutant

- 9.5.2: Affinity Policy

- 9.5.3: API access

- 9.5.4: Application credentials

- 9.5.5: Application credentials - Access Rules

- 9.5.6: Barbican

- 9.5.7: Billing

- 9.5.8: Detach & Attach interface on a Ubuntu instance

- 9.5.9: EC2 Credentials

- 9.5.10: Getting started with OpenStack

- 9.5.11: Octavia

- 9.5.12: Swift getting started

- 9.5.13: Swift projects

- 9.5.14: Swift S3 compatibility

- 9.5.15: Terraform Backend

- 9.5.16: Volume Attachment Limits

- 9.5.17: Volume Backup & Restore

- 9.5.18: Volume migration

- 9.5.19: Volume Retype

- 9.5.20: Windows volume offline after restart

- 10: Tech previews

- 10.1: Elastx Cloud Console

- 10.1.1: Introduction

- 10.1.2: Access and permissions

- 10.1.3: Announcements

- 10.1.4: Features

- 10.1.5: Onboarding

- 10.2: The Vault

- 10.2.1: Knowledge base

- 10.2.1.1: FAQ

- 10.2.2: Backup solutions

- 11: Varnish CDN

- 12: Virtuozzo PaaS

- 12.1: Announcements

- 12.2: Guides

- 12.2.1: Catch-all VirtualHost with 301 redirect on Apache

- 12.2.2: Change public IP without downtime

- 12.2.3: Copy a SQL database

- 12.2.4: Copy files between environments

- 12.2.5: Enable IPv6

- 12.2.6: Enable X11-Forwarding on VPS

- 12.2.7: FAQ

- 12.2.8: Force HTTPS on Apache behind Nginx load balancer

- 12.2.9: Force HTTPS with Tomcat

- 12.2.10: Log real client IP behind a proxy

- 12.2.11: Nginx LB HTTP to HTTPS redirect

- 12.2.12: Nginx redirect to HTTPS

- 12.2.13: Node.JS NPM Module Problems

- 12.2.14: PHP max upload file size

- 12.2.15: Redirect nginx

- 12.2.16: Restrict phpMyAdmin access

- 12.2.17: SMTP on port 25 not working

1 - AI and Other Services

1.1 - Announcements

2024-06-04 AI services now available

Starting with AI to create business value can seem like a complex process. To help our customers to get started with AI we have launched a few different AI introduction services that offer a structured and proven approach to get started. They allow each business to choose which steps to implement and when, based on their unique situation and needs.

The following AI services are now available.

GPU and Private Language Model

Our Private Language Model as a Service is designed to provide you with a secure, cost-effective way to test and verify our future generative AI services within your system.

AI Introduction

AI Introduction is a collaborative starter package by Algorithma and Elastx, designed to efficiently and swiftly kickstart your AI journey.

Responsible AI Training

Join us at our location or invite us to yours for an in-depth session on information security and responsible AI.

GPU and Private Language Model

Our Private Language Model as a Service is designed to provide you with a secure, cost-effective way to test and verify our future generative AI services within your system.

Customized AI Projects

In collaboration with you, Algorithma and Elastx plan and execute tailored AI projects.

You can find information, specifications and pricing here, https://elastx.se/en/ai-services/.

If you have any general questions or would like to sign-up please contact us at hello@elastx.se. For technical questions please register a support ticket at https://support.elastx.se.

2024-02-01 DDoS protection now included

We are happy to announce that we are now adding our DDoS protection to all Elastx Cloud Platform customers for free. This is yet another step in our mission to provide the best cloud platform for business critical services with sensitive data. Your services will now be protected from L3/L4 volumetric DDoS attacks by our inline protection.

If you already are an Elastx customer you do not need to do anything, the DDoS protection service will be enabled automatically. If you are currently subscribing to our DDoS protection service, your subscription will be updated accordingly.

This will apply from 2024-02-01.

L4 proxy and Geo Fencing

We have also added an anycast L4 proxy service with an optional Geo Fencing function.

You can find information, specifications and pricing here, https://elastx.se/en/ddos-protection.

If you have any general questions or would like to sign-up please contact us at hello@elastx.se.

Any technical questions please register a support ticket at https://support.elastx.se.

2023-05-02 Cloud Colocation

We are happy to announce these platform news that will help you to run applications on Elastx Cloud Platform with enhanced security.

Cloud Colocation

Cloud Colocation is a service that enables customers to host their own hardware in the same data centers as the Elastx platform. This could, for example, be hardware security modules (HSM) for storing and managing secrets if required to manage that on your own.

Customers can acquire individual rack units or a separate section in a rack and will receive a dedicated, fast, and private network connection to connect to the desired network in the Elastx platform via Cloud Connect. Customers can also interconnect with other cloud platforms using Elastx Cloud Exchange.

Physical access to the space is provided, and on-site staff is available to offer assistance if needed. Mounting equipment, including cables and rack screws, is included.

Cloud Colocation has been available since 2023-03-04

2023-01-27 Elastx Cloud Platform pricing adjustment

To Elastx Customers and Partners,

We are trying to avoid a general price increase on all services, even though the current high inflation is affecting us hard. We are investing in new more efficient technology to compensate for the increased cost derived from product vendors, utility services, financial service and internal costs. We have identified a few selected services where we need to perform price adjustments to be able to continue the development in a sustainable way.

The new pricing will apply from 2023-03-01.

We will adjust the pricing on Professional Services and also reduce the number of different types to a minimum to make it easier.

| Service | Current price | New price |

|---|---|---|

| Professional Services CloudOps Engineer | 1150 SEK / Month | 1500 SEK / Month |

| Professional services CloudOps Engineer non office hours | 2300 SEK / Month | 3000 SEK / Month |

We will adjust the pricing on Application Monitoring in order to be able to keep the high service levels and continue the development of the service.

| Service | Current price | New price |

|---|---|---|

| Application Monitoring Basic | 495 SEK / Month | 2900 SEK / Month |

| Application Monitoring Advanced | 7900 SEK / Month | 14900 SEK / Month |

2 - Cloud Connect

General

Cloud Connect is a service for customers to make a physical connection to Elastx public cloud.

Locations

The service is offered in our availability zones:

- Elastx STO1

- Elastx STO2

- Elastx STO3

Customers can also connect at “Private Peering Facilities” locations on PeeringDB.

Media

The service only allows fiber-based connections. The following physical media is supported. Customer needs to specify which media they want to connect with.

10G ports:

- 1000BASE-LX (1310 nm)

- 10GBASE-LR (1310 nm)

100G ports:

- 100GBASE-LR4 (1310 nm)

Data Plane Protocols

- MTU 1500 is default. This can be increased to MTU 9000.

- VLAN encapsulation (IEEE 802.1Q) is recommended. All (1-4093) VLAN numbers are available.

- Link Aggregation Control Protocol (IEEE 802.3ad) is supported.

Control Plane Procotols

- Border Gateway Protocol (BGP) is recommended.

Border Gateway Protocol (BGP)

Elastx currently supports IPv4 Address Family (AFI 1) Unicast (SAFI 1).

Private peering

Customer can advertise any network, including 0/0 for default routing. Elastx needs to informed of the networks prior to advertisement.

Any AS numbers within 64512–65534, 4200000000–4294967294 (RFC 6996) can be used. With the exception of reservation 4258252000-4258252999.

Public AS numbers can be approved after ownership verification.

Public peering

Customer can only advertise networks assigned by Elastx. AS numbers are assigned by Elastx.

Public AS numbers can be approved after ownership verification.

3 - Data Security Manager (beta)

3.1 - File System Encryption for Linux

File System Encryption for Linux

This is a quick setup guide for File System Encryption (FSE) using Elastx Data Security Manager.

- Log in to Elastx DSM https://hsm.elastx..cloud and enter the account you want to use.

- Create a Group and set a name. A group can contain multiple secrets but if you want to use quorum approvals you can only manage one FSE per group.

- Create an app and set a name.

- Select the default API Key and the authentication method.

- Assigning the app to the group you just created.

- Get the API Key, select the app you created, under Info > API Key, press the “VIEW API KEY DETAILS” button.

- Log in to the Linux machine where you want to encrypt data. (These instructions are made for Ubuntu 24.04)

- Install fuse.

sudo apt install libfuse2 - Download and install the FSE agent.

wget https://download.fortanix.com/clients/FSE/1.10.147/fortanix-dsm-fseagent-1.10.147.deb

sudo apt install ./fortanix-dsm-fseagent-1.10.147.deb - Create a directory where the configuration and the encrypted files will be stored and a mount point

sudo mkdir /fse /data - Configure the file system

sudo fortanix-dsm-fseagent -dsm -init /fse- Enter the DSM Endpoint: https://hsm.elastx.cloud

- Enter the Api Key: <api key>

There is no text echo, paste the key and press enter.

- Mount the filesystem

sudo fortanix-dsm-fseagent --allow_other /fse /data- Enter the Api Key: <api key>

(twice)

- Enter the Api Key: <api key>

- Install fuse.

- If you want to automatically mount the filesystem at boot do the following.

- Add the API key to file

/etc/fse-auto-mount/api_keys/1.conf - Add the mount command to file

/etc/fse-auto-mount/mount_cmd/1.conf - Reload systemd to apply the changes

sudo systemctl daemon-reload - Enable the service

sudo systemctl enable fse-auto-mount@1.service

- Add the API key to file

- Done

You can find the full documentation here.

4 - DBaaS

4.1 - Announcements

2026-03-31 Elastx Cloud Platform - Compute, Storage and DBaaS

Pricing adjustment

We have absorbed rising operational costs rather than pass them on to you. Despite significant inflationary pressure across the industry, we have managed to keep our prices stable.

The primary driver for our price adjustment is a sharp increase in hardware costs, which have risen 150–250% in the last 6 months. This is a market-wide development entirely outside our control, and one we have worked hard to shield you from for as long as possible. We have reached a point where continuing to do so would compromise our ability to maintain and develop the platform in a sustainable way.

Effective July 1, we will apply a 10% price adjustment to all Compute, Storage and DBaaS services.

We do not take this decision lightly. It is the first time we have increased prices on most of these services, and it reflects the reality of today’s hardware market rather than any change in our commitment to you.

This change will take effect starting July 1, 2026.

Pricing adjustments

We will adjust the pricing on the following services.

| Service | Price increase |

|---|---|

| Compute | 10% |

| Block storage | 10% |

| Object Storage | 10% |

| DBaaS | 10% |

2024-10-14 MSSQL DBaaS now available

We are excited to announce that we now have Microsoft SQL Server available in Elastx Database as a Service.

Elastx DBaaS automatically ensures your databases are reliable, secure, and scalable so that your business continues to run without disruption. You can achieve high availability and disaster protection by configuring replication and backups to protect your data. Backups and multi-node datastores are disaster protected as they are running over multiple availability zones which in our case are geographically separated data centers. Automatic failover makes your database highly available.

The following services are included as standard in our prices: 24x7 support, Threat Intelligence, DDoS protection, encrypted traffic between our availability zones and data encryption at rest.

You can find detailed information, specifications and pricing here, https://elastx.se/en/mssql-dbaas.

If you have any general questions or would like to sign-up please contact us at hello@elastx.se. Any technical questions please register a support ticket at https://support.elastx.se.

2024-04-12 ECP DBaaS Generally Available

We are happy to announce that the following services are now generally available (GA) in Elastx Cloud Platform (ECP).

- MySQL DBaaS

- MariaDB DBaaS

- PostgreSQL DBaaS

- Redis DBaaS

The ECP DataBase as a Service gives you a fully managed database with the possibility to run your database in a high availability and disaster protected environment.

It has been available as a public tech-preview since november 2023. A number of updates and features have been added during this period and the service has now reached a maturity level where we can offer this service to all customers.

You can find information, specifications and pricing here, https://elastx.se/en/database. Service documentation is available here, https://docs.elastx.cloud/docs/dbaas/.

If you have any general questions please contact us at hello@elastx.se and you can sign-up for the service here, https://elastx.se/en/signup. Any technical questions please register a support ticket at https://support.elastx.se.

4.2 - DBaaS Getting Started

CCX is a comprehensive data management and storage solution that offers a range of features including flexible node configurations, scalable storage options, secure networking, and robust monitoring tools. It supports various deployment types to cater to different scalability and redundancy needs, alongside comprehensive management functions for users, databases, nodes, and firewalls. The CCX project provides a versatile platform for efficient data handling, security, and operational management, making it suitable for a wide array of applications and workloads.

Deployment Solutions

Our deployment solutions offer customizable configurations for various node types, designed to support both dynamic and ephemeral storage requirements across multiple cloud environments. This includes comprehensive support for a wide range of cloud regions and instances, ensuring flexibility and scalability.

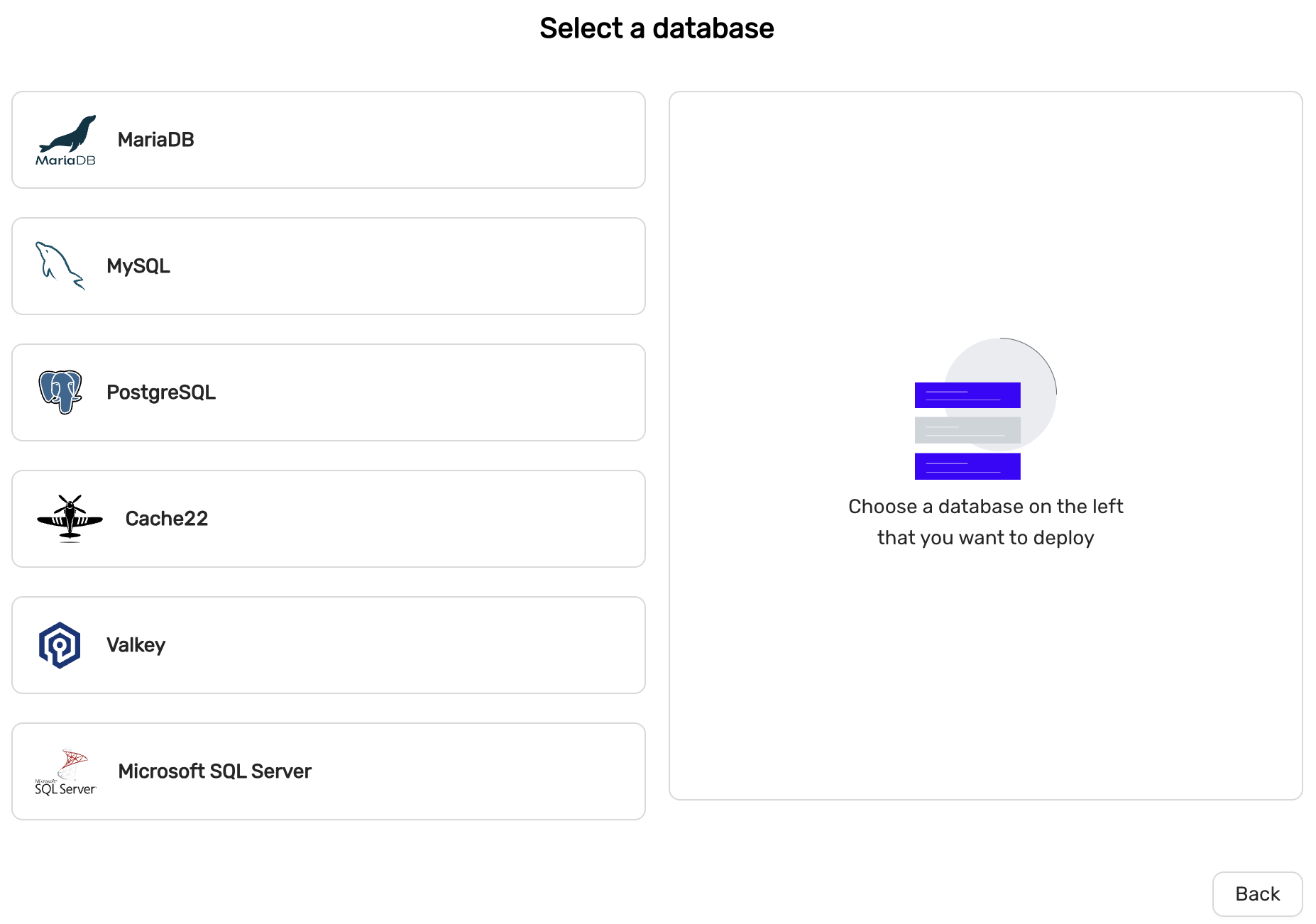

Database Support

Our platform is compatible with a diverse array of database types, including:

- MariaDB

- MySQL

- PostgreSQL

- Cache 22 (deprecated and will be removed in a future release. It is replaced with Valkey)

- Valkey

- Microsoft SQL Server

Node Configurations

We provide support for various node configurations to meet your database needs:

- Replica nodes for MariaDB, MySQL, PostgreSQL, Redis, and Microsoft SQL Server (Single server and Always-On)

- Galera clusters for MariaDB and MySQL

Monitoring and Management

Our platform features advanced monitoring capabilities, offering detailed performance analysis through extensive charts. It enables efficient management of nodes, including:

- Datastore scaling

- Volume scaling

- Promote replica to primary

- Node repair mechanisms

User and Database Administration

We offer sophisticated tools for managing database users and their permissions, ensuring secure access control.

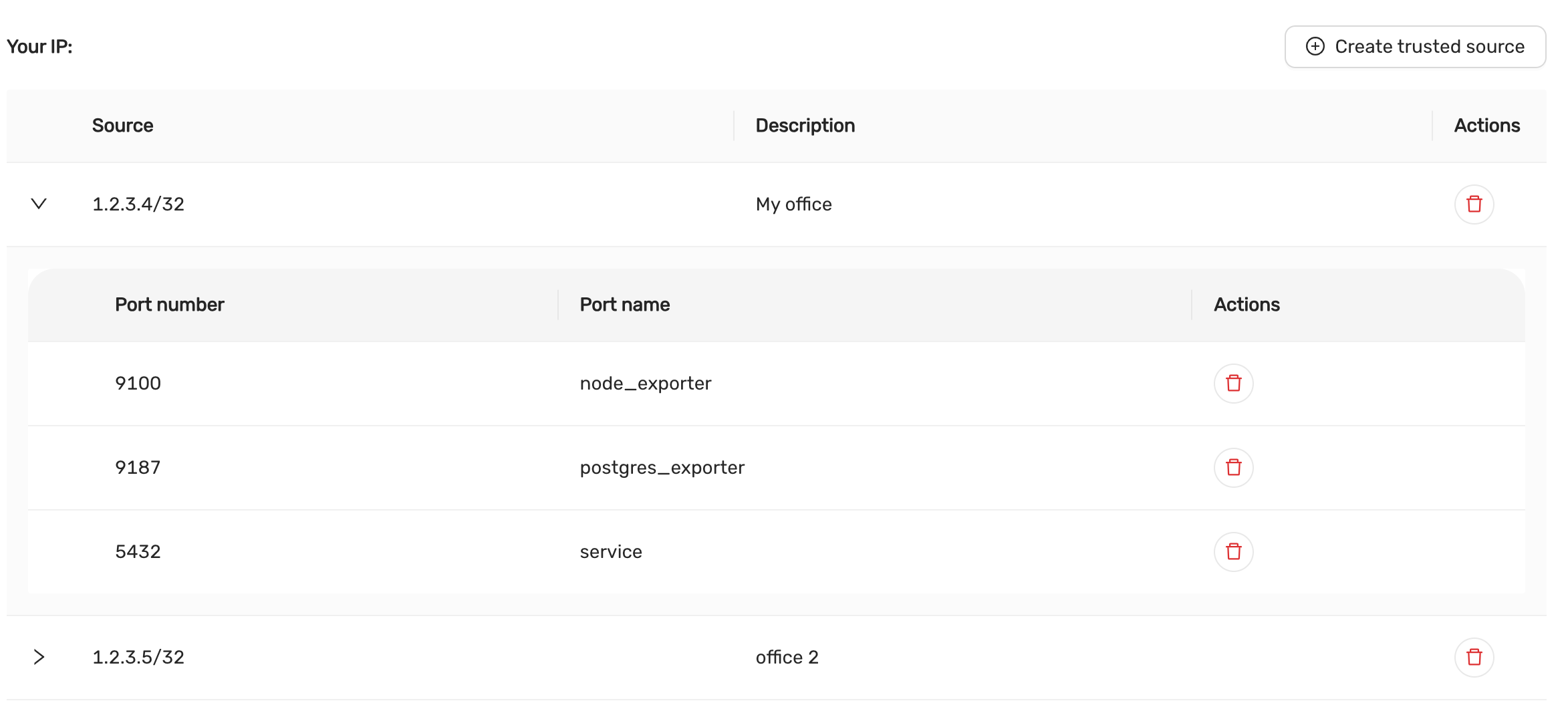

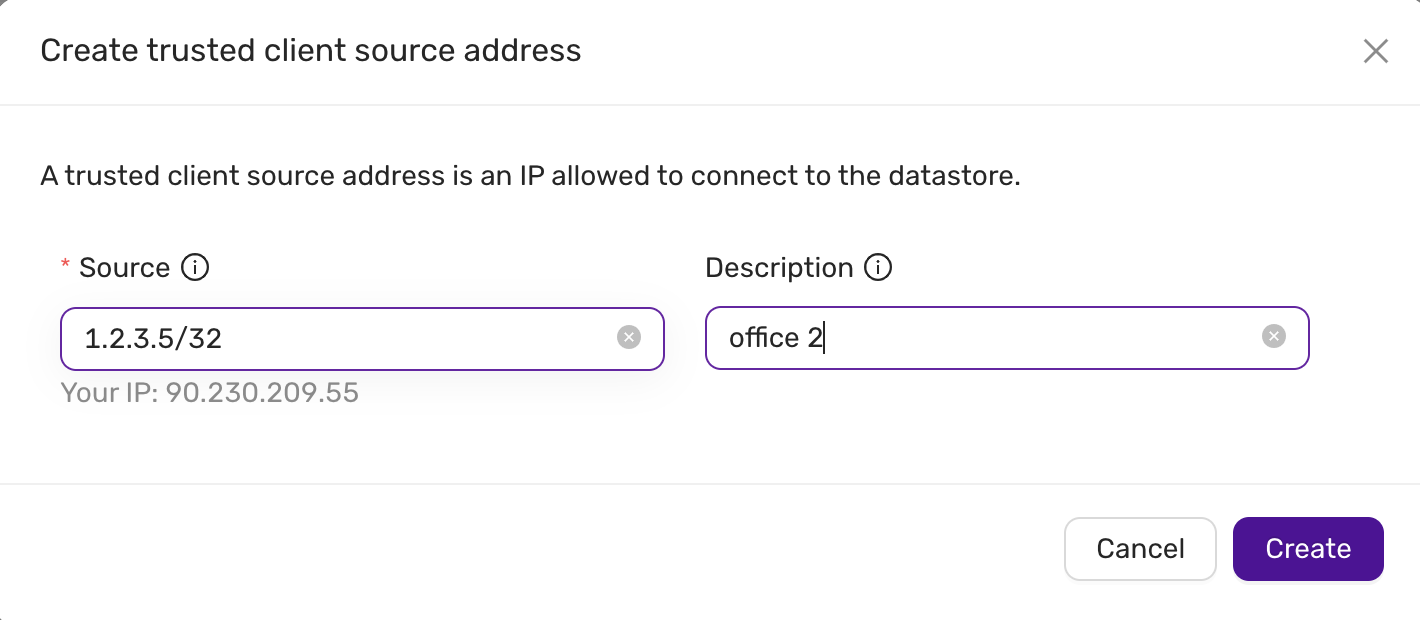

Network Security

Our firewall configuration options are designed to enhance network security, providing robust protection for your data.

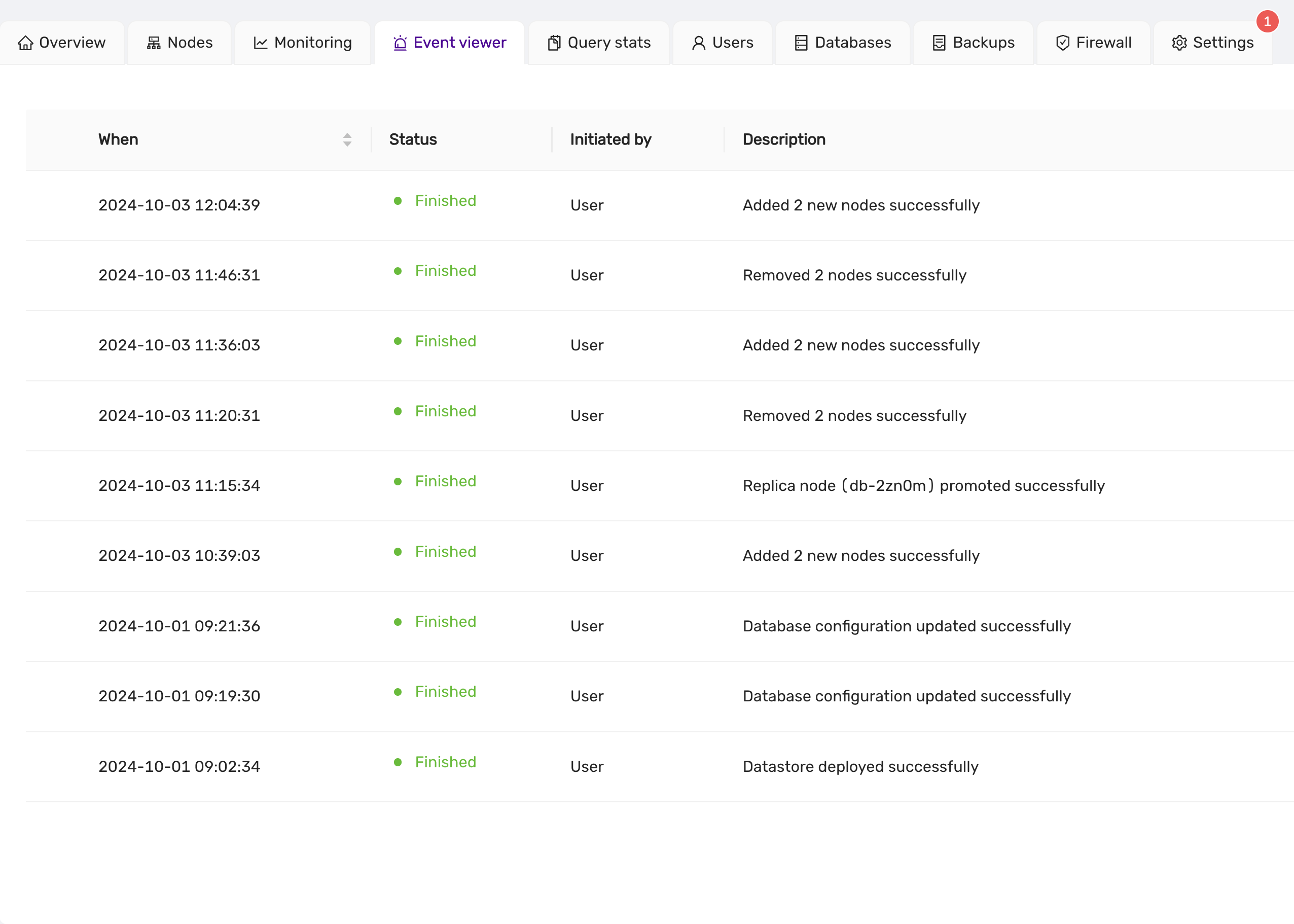

Event Logging

The event viewer tracks and displays a comprehensive history of operations performed on the datastore, enhancing transparency and accountability.

Backup and Recovery

Our backup solutions include:

- Incremental and full backup options for comprehensive data protection

- Point-in-time recovery capabilities

- Automated cloud backup uploads with customizable retention periods

- Restoration from separate volumes to optimize datastore space utilization

Customizable Settings

We offer customizable settings for various operational database parameters, allowing for tailored database management.

Account Management

Our platform facilitates user account creation and management, streamlining the login and registration process.

Billing and Payments

Our billing and payment processing tools are designed to simplify financial transactions, including the management of payments and invoices.

Feature Matrix

Each datastore has different features and are suitable for different use cases. Below is a feature matrix showing what operational feature is supported on each datastore:

| MySQL | MariaDb | PostgreSQL | Valkey/Cache22 | MS SQLServer (single server) |

MS SQLServer (Always-on, std license) |

|

|---|---|---|---|---|---|---|

| Scale nodes | Yes | Yes | Yes | Yes | No | No |

| Scale volume | Yes | Yes | Yes | Yes | Yes | Yes |

| Upgrade | Yes | Yes | Yes | Yes | Yes | Yes |

| Promote replica | Yes | Yes | Yes | Yes | Yes | Yes |

| Configuration management | Yes | Yes | Yes | Yes | No | No |

| Backup to S3 | Yes | Yes | Yes | Yes | Yes | Yes |

| Restore | Yes | Yes | Yes | Yes | Yes | Yes |

| PITR | Yes | Yes | Yes | No | No | No |

| User management† | Yes | Yes | Yes | Yes | Yes | Yes |

| Create databases | Yes | Yes | Yes | Yes | No | No |

| Query monitoring | Yes | Yes | Yes | Yes | No | Yes |

| Database growth (Capacity planning) |

Yes | Yes | Yes | Yes | No | Yes |

† : User management features and scope depends on the underlying datastore. There are datastore specific limitations.

4.3 - FAQ

Does CCX provide a High-availability feature

Yes

Can I change the database configuration?

This is a managed service.

Does CCX support multiple AZs.

CCX supports multiple AZs if the Cloud Provider does.

Can write only instances and read only replicas be created?

Yes, but the write-only instances is read-write. It allows both reads and writes.

Does support use a Proxy or Load Balancer (fora example, if there are 2 or more Read Replica instances, then Read can be load balanced across multiple instances).

DNS is used to facilitate this. However, the user can create his own loadbalancer (such as HAProxy or ProxySQL) and connect to the database service. A load balancer should be placed as close as possible to the user’s application. We recommend that the end-user manages the loadbalancer.

Are backups automatic?

Yes, backups are created automatically. The user can set the frequency.

Is there an auto-upgrade SQL version feature, for minor and major updates?

Only minor upgrades. Major upgrades are not supported in an online operation. See product documentation about upgrades (Life-cycle management)

Can it be backed up externally, for example dumping data?

Yes. See product documentation.

Can external data be restored, if yes, how?

See product documentation.

4.4 - Managed Service

CCX is provided as a managed service for your database engine in the cloud(s) you select. This document aims to outline the responsibilities of CCX and what rests with you, as the user.

CCX does:

- Deploy, secure and configure database engines onto virtual machines (VMs) in your chosen cloud

- Allow you to:

- Configure firewall rules for access

- Create new databases and users

- Configure replication for the chosen topology

- Ensure connectivity between nodes

- View metrics for each VM and the datastore

- View query statistics for your service

- Configure and set a backup schedule for your service

- Configure a maintenance window that allows CCX to perform maintenance and provide security patches

- Scale your service horizontally (up and down)

- Scale your storage vertically (up)

- Manage and monitor the database to ensure connectivity

The primary responsibility of CCX is to ensure that your datastore is running at all times, reacting to scenarios to ensure this is true. CCX does not access your data or control how you use the databases within your datastore.

In order to achieve this, CCX does not:

- Provide SSH or other ways to access the underlying infrastructure

- Allow superuser access to the managed services

- Allow the modification of settings that are not suitable for production use

- Allow the installation of untrusted extensions or code

If you do have specific requirements, such as:

- Temporary modification of configuration

- Feature requests for extensions or different versions of the databases

Please reach out to Support and we will work with you to find a solution.

4.5 - Overview

Elastx DBaaS automatically ensures your databases are reliable, secure, and scalable so that your business continues to run without disruption. It provides full compatibility with the source database engines while reducing operations costs by automating database provisioning and other time-consuming tasks.

Easy high availability and disaster protection by configuring replication, clusters and backups to protect your data. Backups and multi-node datastores are disaster protected as they are running over multiple availability zones which in our case are geographically separated data centers. Automatic failover makes your database highly available. Your data is encrypted at rest, and ISO 27001, 27017, 27018 as well as 14001 compliant.

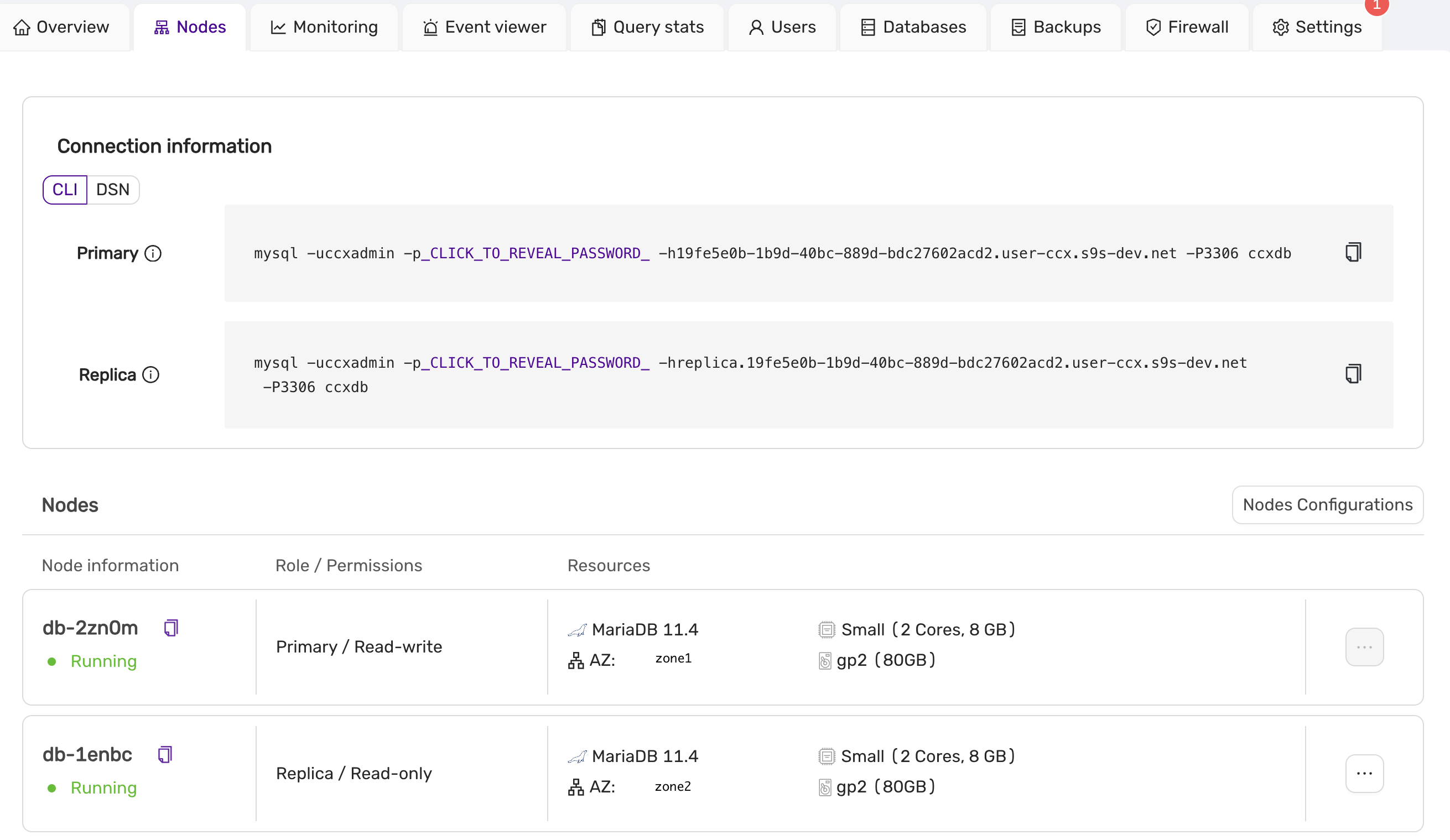

A Datastore is a database instance with one or more nodes. In the Datastore you can have one or more databases. A datastore can be created with a single node, three nodes in a active/active cluster or a primary node with one or two read only replicas.

You can manage the Datastores with the web-UI. Authentication to the web-UI is done with the Elastx Identity Provider where MFA with TOTP or Yubikey is required. All datastores owned by the organization will be visible for all users with access to that organization.

To access the datastores you need database user credentials which you get and manage for each individual database. You also need to configure the Datastore firewall to allow access from specific IP addresses. The Connection assistant will help you to get the connection string for common programming languages.

In the web-UI you get graphs on key performance metrics on the database and the nodes that will help you to manage capacity and performance. You can scale the Datastore by adding or removing nodes and also to change the size of a node by replacing a node with a different flavor. Contact Elastx support if you need to increase the storage capacity and we will help you. Please note that ephemeral storage can’t be increased unless you change node flavor.

4.6 - Reference

4.6.1 - Datastore Statuses

When you deploy a Datastore, you will see a Status reported in the CCX Dashboard. This article outlines the statuses and what they mean.

| Status | Description | Action Required? |

|---|---|---|

| Deploying | Your Datastore is being configured and deployed into the Cloud you specified |

No |

| Available | Your Datastore is up and running with no reported issues |

No |

| Unreachable | Your Datastore might be running but CCX is not able to communicate directly with one or more Node(s). |

Verify you can access the Datastore and contact Support |

| Maintenance | Your Datastore is applying critical security updates during the specific maintenance window. |

No |

| Deleting | You have requested the deletion of your Datastore and it is currently being processed. |

No, unless this deletion was not requested by you or the Datastore has been in this state for more than 2 hours |

| Deleted | Your Datastore has been deleted. |

No |

| Failed | Your Datastore has failed, this can be a hardware or software fault |

Contact Support |

4.6.2 - Glossary

| Term | Definition | AKA | Area |

|---|---|---|---|

| Datastore | A deployment of a Database on CCX. A Datastore has a unique ID, it is essential to include this when contacting Support with issues or queries. |

Service | Deployment |

| Node | A Virtual Machine (VM) in a Cloud that makes up a Datastore. A Node consists of:- CPU - the number of cores - RAM - the amount (GB) of memory - Storage - the amount (GB/TB) of persistent storage |

Virtual Machine (VM) Node Server Instance |

Compute |

| Storage | The amount of persistent data for your Datastore.Storage comes in multiple different formats and not all are supported by all Clouds. There are cost and performance considerations when choosing the storage. |

Storage | |

| Volumes | The types of Storage available. Typically, this is measured in IOPS and the higher IOPS has increased performance with an increased cost per GB |

||

| Database | The engine deployed and configured for your Datastore. To see these options, check Supported Databases |

Database Management System (DBMS) | General |

| Virtual Private Cloud (VPC) | A private network configured that is unique to your account and ensures that any traffic between your Datastore does not go over the Public Internet |

Private Network | Networking |

| Cloud | An infrastructure provider where Datastores can be deployed |

Deployment | |

| Region | A geographic region with one or more Datacentres owned or operated by a Cloud. A Datastore is deployed into a single Region |

Deployment | |

| Availability Zone (AZ) | A Region can have one or more Availability Zones. More than one Availability Zones allows one Datacentre to go down without bringing down all of the Nodes in your Datastore.CCX will automatically attempt to deploy each Node in a Datastore into a different AZ (if the Region supports it) |

Deployment | |

| Replication | A method of exchanging data between two Nodes that ensures they stay in sync and allows one Node to fail without bringing your Datastore down |

Operations | |

| Primary / Replica | The recommended deployment for a Production Datastore with 2 or more Nodes, one acting as the Primary and the other(s) acting as the Replica |

Highly Available High Availability |

Operations |

| Multi-Primary | Multiple Nodes deployed with the same role, all of them acting as the Primary. This topology is not supported by all Databases |

Clustered | Operations |

| Status | The last known status of your Datastore. For details of the possible statuses, see here |

State | Operations |

| Maintenance | The application of critical security updates to your Datastore. These are applied in your Maintenance Window which can be configured per Datastore. |

Operations | |

| Monitoring | This is the metrics of the hardware and software for your Datastore. These can be accessed in the CCX Dashboard and can be shown per Node. For details of the metrics available, see here. |

Observability |

4.6.3 - Notifications

CCX notifies users by email in case of certain events. Recipients can be configured on the Datastore Settings page or in the Datastore wizard.

| Alert | Description | Action Required? |

|---|---|---|

| Cluster Upgrade | Cluster is being upgraded | No |

| Cluster Storage Resized | Cluster storage has been automatically resized from size to new_size. | No |

| HostAutoScaleDiskSpaceReached | The cluster is running out of storage and will be automatically scaled. | No |

4.6.4 - Observability

4.6.4.1 - Metrics

4.6.4.1.1 - Introduction

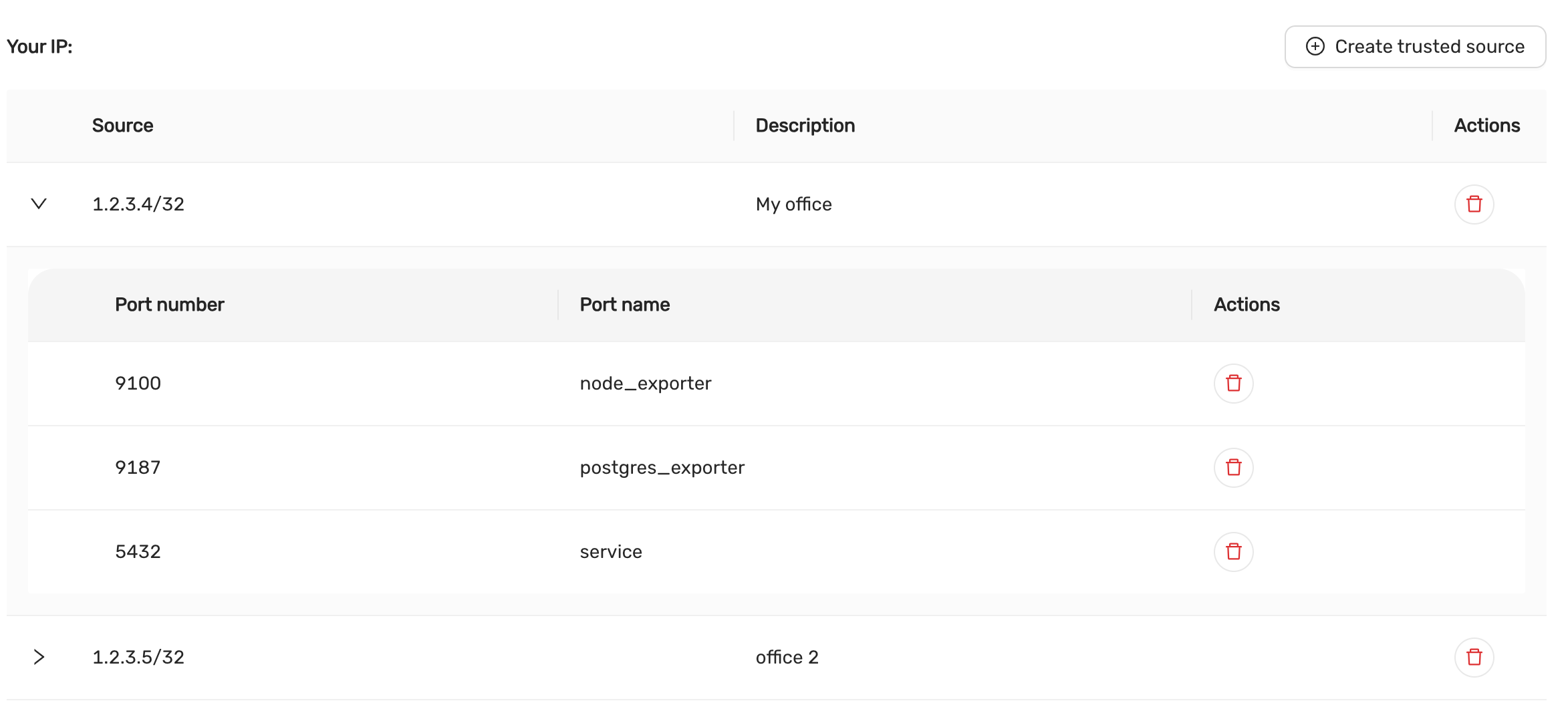

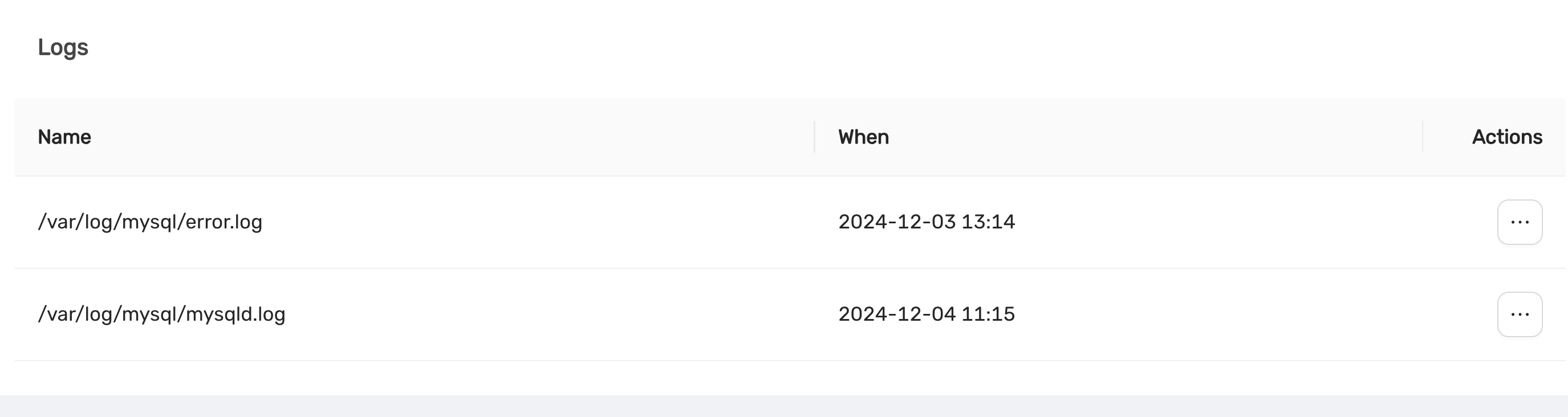

CCX uses Prometheus and exporters for monitoring. The monitoring data is exposed though the exports from each node. This is a controlled under the Firewall tab in the CCX UI.

4.6.4.1.2 - MySQL And MariaDB

- MySQL / MariaDB

- Handler Stats

- Statistics for the handler. Shown as:

- Read Rnd

- Count of requests to read a row based on a fixed position

- Read Rnd Next

- Count of requests to read a subsequent row in a data file

- Read Next

- Count of requests to read the next row in key order

- Read Last

- Count of requests to read the last key in an index

- Read Prev

- Count of requests to read the previous row in key order

- Read First

- Count of requests to read a row based on an index key value

- Read Key

- Count of requests to read the last key in an index

- Update

- Count of requests to update a row

- Write

- Count of requests to insert to a table

- Read Rnd

- Statistics for the handler. Shown as:

- Handler Transaction Stats

- Database Connections

- Count of connections to the database. Shown as:

- Thread Connected

- Count of clients connected to the database

- Max Connections

- Count of max connections allowed to the database

- Max Used Connections

- Maximum number of connections in use

- Aborted Clients

- Number of connections aborted due to client not closing

- Aborted Connects

- Number of failed connection attempts

- Connections

- Number of connection attempts

- Thread Connected

- Count of connections to the database. Shown as:

- Queries

- Count of queries executed

- Scan Operations

- Count of operations for the operations: SELECT, UPDATE and DELETE

- Table Locking

- Count of table locks. Shown as:

- Table locks immediate

- Count of table locks that could be granted immediately

- Table locks waited

- Count of locks that had to be waited due to existing locks or another reason

- Table locks immediate

- Count of table locks. Shown as:

- Temporary Tables

- Count of temporary tables created. Shown as:

- Temporary tables

- Count of temporary tables created

- Temporary tables on Disk

- Count of temporary tables created on disk rather than in memory

- Temporary tables

- Count of temporary tables created. Shown as:

- Sorting

- Aborted Connections

- Count of failed or aborted connections to the database. Shown as:

- Aborted Clients

- Number of connections aborted due to client not closing

- Aborted Connects

- Number of failed connection attempts

- Access Denied Errors

- Count of unsuccessful authentication attempts

- Aborted Clients

- Memory Utilisation

- Count of failed or aborted connections to the database. Shown as:

- Handler Stats

4.6.4.1.3 - PostgreSQL

- PostgreSQL

- SELECT (fetched)

- Count of rows fetched by queries to the database

- SELECT (returned)

- Count of rows returned by queries to the database

- INSERT

- Count of rows inserted to the database

- UPDATE

- Count of rows updated in the database

- DELETE

- Count of rows deleted in the database

- Active Sessions

- Count of currently running queries

- Idle Sessions

- Count of connections to the database that are not currently in use

- Idle Sessions in transaction

- Count of connections that have begun a transaction but not yet completed while not actively doing work

- Idle Sessions in transaction (aborted)

- Count of connections that have begun a transaction but did not complete and were forcefully aborted before they could complete

- Lock tables

- Active locks on the database

- Checkpoints requested and timed

- Count of checkpoints requested and scheduled

- Checkpoint sync time

- Time synchronising checkpoint files to disk

- Checkpoint write time

- Time to write checkpoints to disk

- SELECT (fetched)

4.6.4.1.4 - Redis

- Redis

- Blocked Clients

- Clients blocked while waiting on a command to execute

- Memory Used

- Amount of memory used by Redis (in bytes)

- Connected Clients

- Count of clients connected to Redis

- Redis commands per second

- Count of commands processed per second

- Total keys

- The total count of all keys stored by Redis

- Replica Lag

- The lag (in seconds) between the primary and the replica(s)

- Blocked Clients

4.6.4.1.5 - System

- System - Hardware level metrics for your Datastore

- Load Average

- The overall load on your Datastore within the preset period

- CPU Usage

- The breakdown of CPU utilisation for your Datastore, including both

SystemandUserprocesses

- The breakdown of CPU utilisation for your Datastore, including both

- RAM Usage

- The amount of RAM (in Gigabytes) used and available within the preset period

- Network Usage

- The amount of data (in Kilobits or Megabits per second) received and sent within the preset period

- Disk Usage

- The total amount of storage used (in Gigabytes) and what is available within the preset period

- Disk IO

- The input and output utilisation for your disk within the preset period

- Disk IOPS

- The number of read and write operations within the preset period

- Disk Throughput

- The amount of data (in Megabytes per second) that is being read from, or written to, the disk within the preset period

- Load Average

4.6.4.1.6 - Valkey

- Valkey

- Blocked Clients

- Clients blocked while waiting on a command to execute

- Memory Used

- Amount of memory used by Valkey (in bytes)

- Connected Clients

- Count of clients connected to Valkey

- Valkey commands per second

- Count of commands processed per second

- Total keys

- The total count of all keys stored by Valkey

- Replica Lag

- The lag (in seconds) between the primary and the replica(s)

- Blocked Clients

4.6.5 - Products

4.6.5.1 - MariaDb

4.6.5.1.1 - Backup

Mariabackup is used to create backups.

CCX backups the Primary server. In multi-primary setups the node with the highest wsrep_local_index is elected.

Backups are streamed directly to S3 staroge.

Mariabackup blocks DDL operations during the backup using the --lock-ddl flag.

Any attempt to CREATE, ALTER, DROP, TRUNCATE a table during backup creation will be locked with the status Waiting for backup lock (see SHOW FULL PROCESSLIST).

In this case, wait for the backup to finish and, perform the operation later.

Also see the section ‘Schedule’.

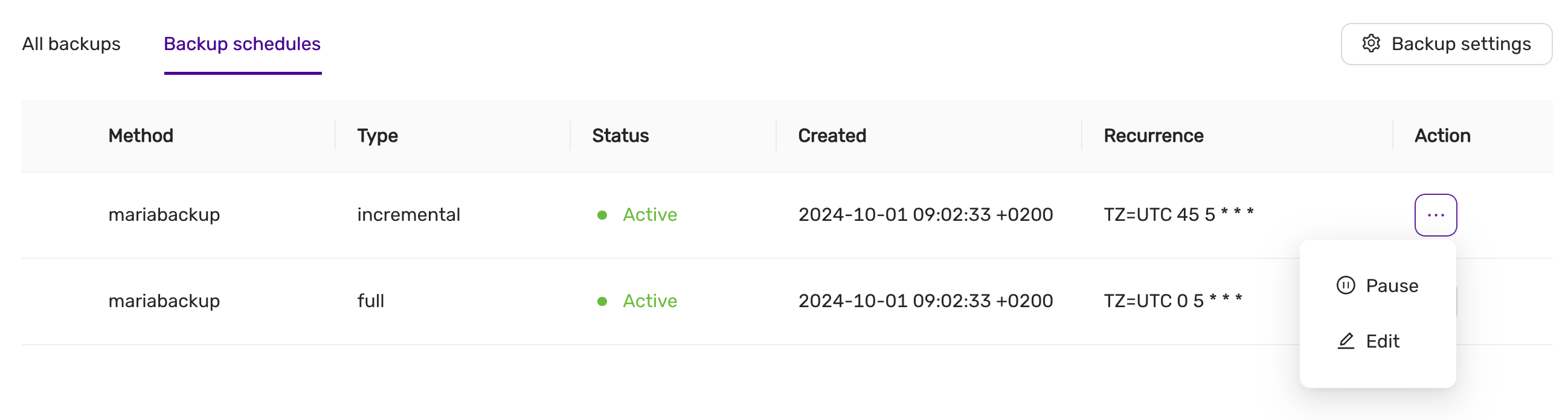

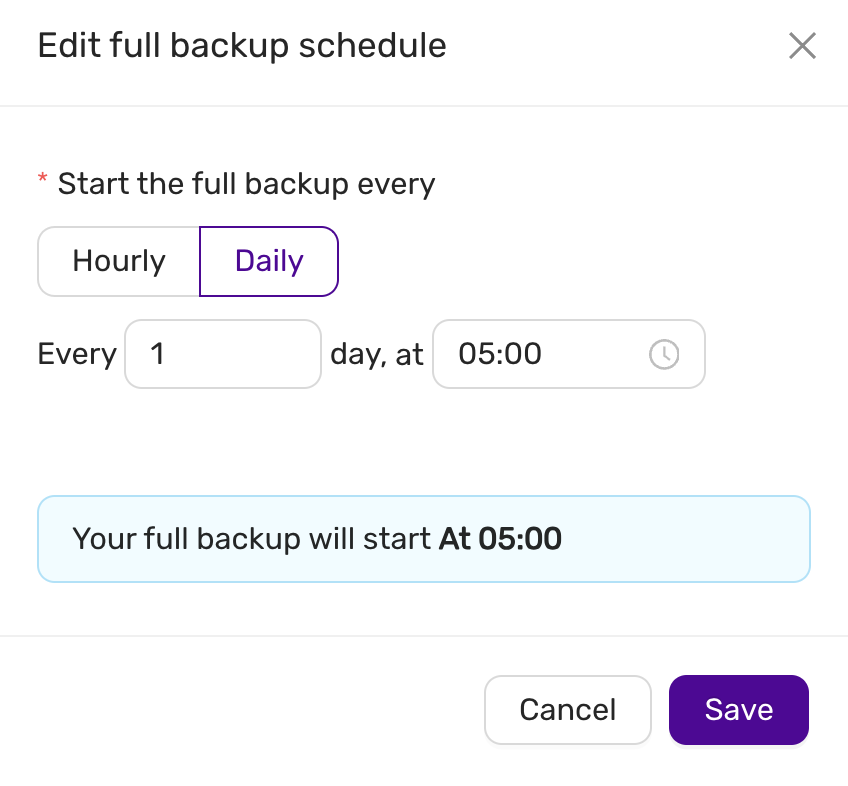

Schedule

The backup schedule can be tuned and backups can be paused

4.6.5.1.2 - Configuration

max_connections

- 75 connections / GB of RAM.

- Example: 4GB of RAM yields 300 connections.

- This setting cannot be changed as it affects system stability.

InnoDB settings

- These setting cannot be changed as it affects system stability.

innodb_buffer_pool_size

- 50% of RAM if total RAM is > 4GB

- 25% of RAM if total RAM is <= 4GB

innodb_log_file_size

- 1024 MB if innodb_buffer_pool_size >= 8192MB

- 512 MB if innodb_buffer_pool_size < 8192MB

innodb_buffer_pool_instances

- 8

InnoDB options

| variable_name | variable_value |

|---|---|

| innodb_buffer_pool_size | Depends on instance size |

| innodb_flush_log_at_trx_commit | 2 |

| innodb_file_per_table | 1 |

| innodb_data_file_path | Depends on instance |

| innodb_read_io_threads | 4 |

| innodb_write_io_threads | 4 |

| innodb_doublewrite | 1 |

| innodb_buffer_pool_instances | Depends on instance size |

| innodb_redo_log_capacity | 8G |

| innodb_thread_concurrency | 0 |

| innodb_flush_method | O_DIRECT |

| innodb_autoinc_lock_mode | 2 |

| innodb_stats_on_metadata | 0 |

| default_storage_engine | innodb |

General options

| variable_name | variable_value |

|---|---|

| tmp_table_size | 64M |

| max_heap_table_size | 64M |

| max_allowed_packet | 1G |

| sort_buffer_size | 256K |

| read_buffer_size | 256K |

| read_rnd_buffer_size | 512K |

| memlock | 0 |

| sysdate_is_now | 1 |

| max_connections | Depends on instance size |

| thread_cache_size | 512 |

| table_open_cache | 4000 |

| table_open_cache_instances | 16 |

| lower_case_table_names | 0 |

Storage

Recommended storage size

- We recommend a maximum of 100GB storage per GB of RAM.

- Example: 4GB of RAM yields 400GB of storage.

- The recommendation is not enforced by the CCX platform.

4.6.5.1.3 - Importing Data

This procedure describes how to import data to a MariaDB datastore located in CCX.

- The MariaDB Datastore on CCX is denoted as the ‘replica’

- The source of the data is denoted as the ‘source’

note:

If you do not want to setup replication, then you can chose to only apply the sections:

- Create a database dump file

- Apply the dumpfile on the replica

Limitations of MariaDB

MariaDB does not offer as fine grained control over privileges as MySQL. Nor does it have the same level of replication features.

The following properties must be respected in order to comply with the SLA:

- There must be no user management happening on the source, while the data is imported and the replication link is active. This is avoid corruption of the mysql database and possibly other system databases.

- It is recommended to set

binlog-ignore-dbon the source to ‘mysql, performance_schema, and sys’ during the data import/sync process.

Preparations

Ensure that the source is configured to act as a replication source.

- Binary logging is enabled.

server_idis set to non 0.

Also, prepare the replica with the databases you wish to replicate from the source to the master:

- Using the CCX UI, go to Databases, and issue a Create Database for each database that will be replicated.

Ensure the CCX Firewall is updated:

- Add the replication source as a Trusted Source in the Firewall section of the CCX UI.

Create a replication user on the source

Create a replication user with sufficient privileges on the source:

CREATE USER 'repluser'@'%' IDENTIFIED BY '<SECRET>';

GRANT REPLICATION SLAVE ON *.* TO 'repluser'@'%';

Prepare the replica to replicate from the source

The replica must be instrucuted to replicate from the source.

Make sure to change <SOURCE_IP>, <SOURCE_PORT>, and <SECRET>.

Run the following on the source:

CHANGE MASTER TO MASTER_HOST=<SOURCE_IP>, MASTER_PORT=<SOURCE_PORT>, MASTER_USER='repluser', MASTER_PASSWORD='<SECRET>', MASTER_SSL=1;

Create a database dump file of the source

The database dump contains the data that you wish to import into the replica. Only partial dumps are possible. The dump must not contains any mysql or other system databases.

danger: The dump must not contains any mysql or other system databases.

On the source, issue the following command. Change ADMIN, SECRET and DATABASES:

mysqldump -uADMIN -p<SECRET> --master-data --single-transaction --triggers --routines --events --databases DATABASES > dump.sql`

If your database dump contains SPROCs, triggers or events, then you must replace DEFINER. This may take a while:

sed 's/\sDEFINER=`[^`]*`@`[^`]*`//g' -i dump.sql

Apply the dumpfile on the replica

cat dump.sql | mysql -uccxadmin -p -h<REPLICA_PRIMARY>

Start the replica

On the replica do:

START SLAVE

followed by

SHOW SLAVE STATUS;

And verify that:

Slave_IO_State: Waiting for source to send event

..

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

When the migration is ready

STOP SLAVE;

RESET SLAVE ALL;

Troubleshooting

If the replication fails to start then verify:

- All the steps above has been followed.

- Ensure that the replication source is added as a Trusted Source in the Firewall section of the CCX UI.

- Ensure that you have the correct IP/FQDN of the replication source.

- Ensure that users are created correctly and using the correct password.

- Ensure that the dump is fresh.

4.6.5.1.4 - Limitations

Every product has limitations. Here is a list MariaDB limitations:

Permissions

The privilege system is not as flexible as in MySQL.

The ‘ccxadmin’ user has the following privileges:

Global / all databases (.):

- CREATE USER, REPLICATION SLAVE, REPLICATION SLAVE ADMIN, SLAVE MONITOR

On databases created from CCX, the admin user can create new users and grant privileges:

- ALL PRIVILEGES WITH GRANT OPTION

This means that users can only create databases from the CCX UI. Once the database has been created from the CCX UI, then the ccxadmin user can create users and grant user privileges on the database using MariaDB CLI.

4.6.5.1.5 - Overview

CCX supports two types of MariaDB clustering:

- MariaDB Replication (Primary-replica configuration)x

- MariaDB Cluster (Multi-primary configuration)

For general purpose applications we recommend using MariaDB Replication, and we only recommend to use MariaDB Cluster if you are migrating from an existing application that uses MariaDB Cluster.

If you are new to MariaDB Cluster we stronly recommend to read about the MariaDB Cluster 10.x limitations and MariaDB Cluster Overview to understand if your application can benefit from MariaDB Cluster.

MariaDB Replication uses the standard asynchronous replication based on GTIDs.

Scaling

Storage and nodes can be scaled online.

Nodes (horizonal)

- The maximum number of database nodes in a datastore is 5.

- Multi-primary configuration must contain an odd number of nodes (1, 3 and 5).

Nodes (vertical)

A node cannot be scaled vertically currently. To scale to large instance type, then a larger instance must be added and then remove the unwanted smaller instances.

Storage

- Maximum size depends on the service provider and instance size

- Volume type cannot currently be changed

Further Reading

4.6.5.1.6 - Restore

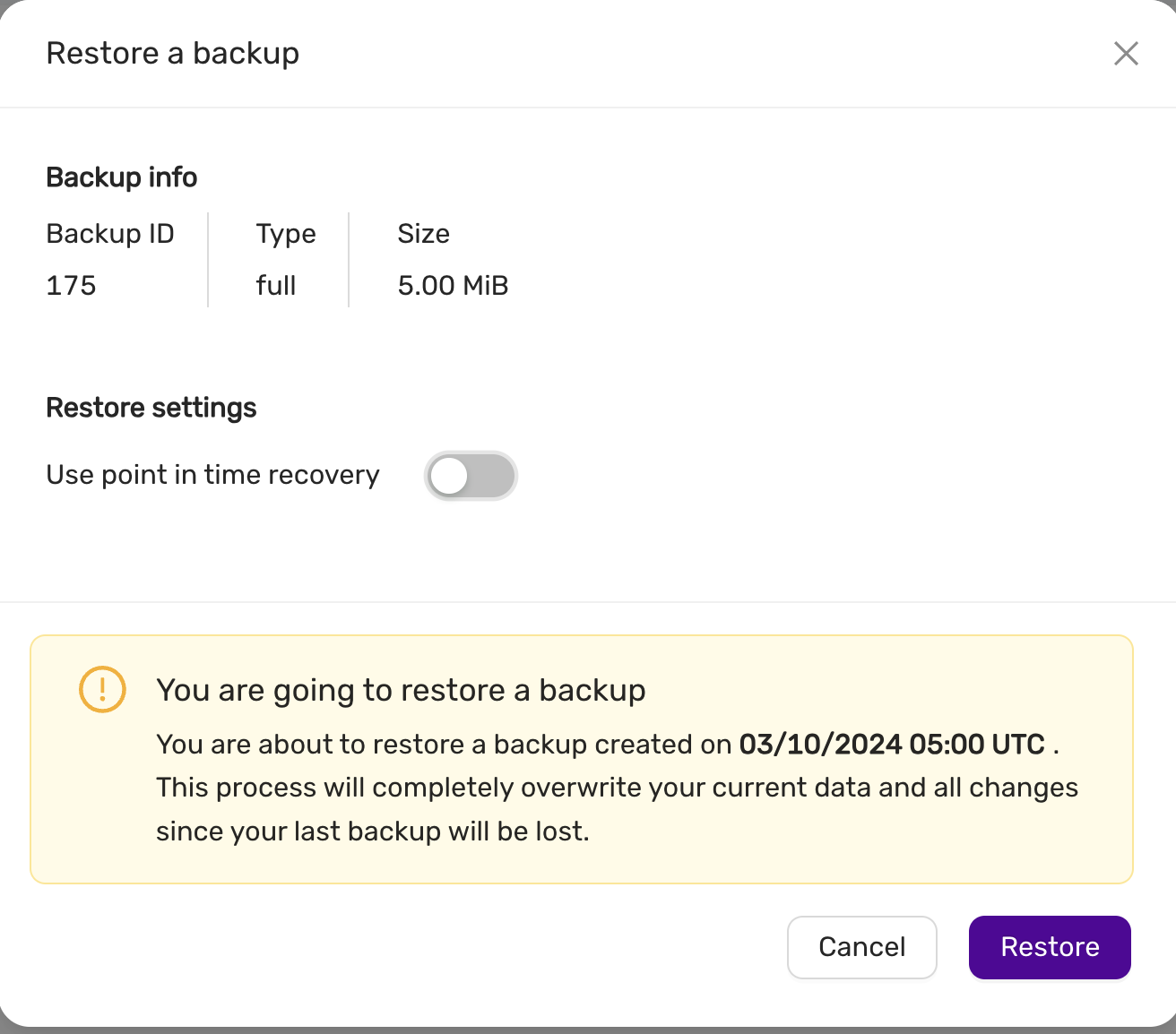

There are two options to restore a backup:

- Restore a backup on the existing datastore

- Restore a backup on a new datastore

Please note that restoring a backup may be a long running process.

This option allows to restore a backup with point in time recovery. The WAL logs are replayed until the desired PITR.

Warning! Running several restores may change the timelines.

This option allows to restore a backup on a new datastore. This option does not currently support PITR.

4.6.5.1.7 - TLS Connection

SSL Modes

CCX currently supports connections to MariaDB in two SSL modes:

REQUIRED: This mode requires an SSL connection. If a client attempts to connect without SSL, the server rejects the connection.VERIFY_CA: This mode requires an SSL connection and the server must verify the client’s certificate against the CA certificates that it has.

CA Certificate

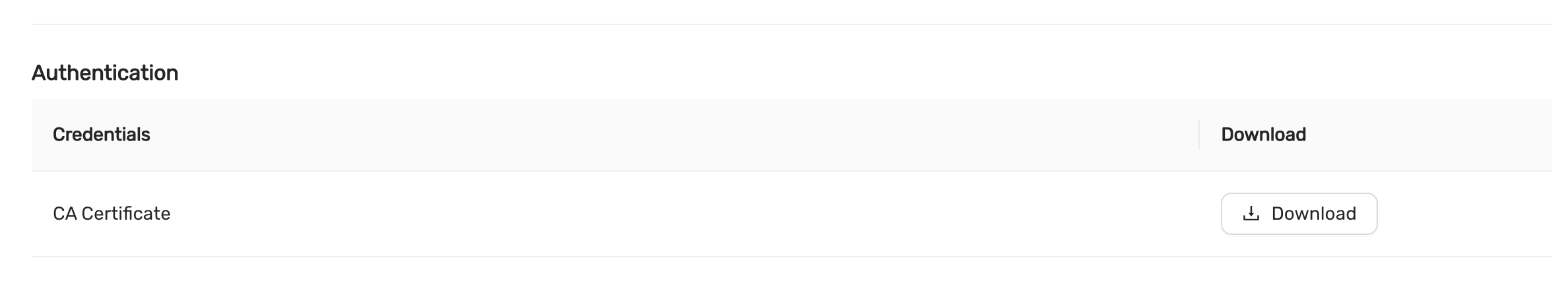

The Certificate Authority (CA) certificate required for VERIFY_CA mode can be downloaded from your datastore on CCX using an API call or through the user interface on page https://{your_ccx_domain}/projects/default/data-stores/{datastore_id}/settings.

This certificate is used for the VERIFY_CA SSL mode.

Example Commands

Here are example commands for connecting to the MySQL server using the two supported SSL modes:

-

REQUIREDmode:mysql --ssl-mode=REQUIRED -u username -p -h hostname -

VERIFY_CAmode:mysql --ssl-mode=VERIFY_CA --ssl-ca=ca.pem -u username -p -h hostname

require_secure_transport

This is a MariaDB setting that governs if connections to the datastore are required to use SSL. You can change this setting in CCX in Settings -> DB Parameters

| Scenario | Server Parameter Settings | Description |

|---|---|---|

| Disable SSL enforcement | require_secure_transport = OFF |

This is the default to support legacy applications. If your legacy application doesn’t support encrypted connections, you can disable enforcement of encrypted connections by setting require_secure_transport=OFF. However, connections are encrypted unless SSL is disabled on the client. See examples |

| Enforce SSL | require_secure_transport = ON |

This is the recommended configuratuion. |

Examples

ssl-mode=DISABLED and require_secure_transport=OFF

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=disabled

...

mysql> \s

--------------

...

Connection id: 52

Current database: ccxdb

Current user: ccxadmin@...

*SSL: Not in use*

Current pager: stdout

...

ssl-mode=PREFERRED and require_secure_transport=OFF

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=preferred

...

mysql> \s

--------------

...

Connection id: 52

Current database: ccxdb

Current user: ccxadmin@...

SSL: Cipher in use is TLS_AES_256_GCM_SHA384

Current pager: stdout

...

ssl-mode=DISABLED and require_secure_transport=ON

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=disabled

mysql: [Warning] Using a password on the command line interface can be insecure.

ERROR 3159 (08004): Connections using insecure transport are prohibited while --require_secure_transport=ON.

ssl-mode=PREFERRED|REQUIRED and require_secure_transport=ON

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=preferred|required

mysql> \s

--------------

...

Connection id: 52

Current database: ccxdb

Current user: ccxadmin@...

SSL: Cipher in use is TLS_AES_256_GCM_SHA384

Current pager: stdout

...

tls_version

The tls_version is set to the following by default:

| Variable_name | Value |

|---|---|

| tls_version | TLSv1.2,TLSv1.3 |

4.6.5.2 - MSSQLServer

4.6.5.2.1 - Configurations

Important default values

max_connections

- SQL Server has no direct “max connection per GB of RAM” rule. The actual number of user connections allowed depends on the version of SQL Server that you are using, and also the limits of your application(s), and hardware.

- SQL Server allows a maximum of 32,767 user connections.

- User connections is a dynamic (self-configuring) option, SQL Server adjusts the maximum number of user connections automatically as needed, up to the maximum value allowable.

- In most cases, you do not have to change the value for this option. The default is 0, which means that the maximum (32,767) user connections are allowed.

- To determine the maximum number of user connections that your system allows, you can execute

sp_configureor query thesys.configurationcatalog view. - For more info: https://learn.microsoft.com/en-us/sql/database-engine/configure-windows/configure-the-user-connections-server-configuration-option?view=sql-server-ver16&viewFallbackFrom=sql-server-ver16.

4.6.5.2.2 - Limitations

Every product has limitations. Below is a list of Microsoft SQL Server limitations:

License

- The standard license is applied.

Configurations

- Single node (no High Availability)

- Always On (2 nodes, asynchronous commit mode, High Availability)

Always On-specific limitations

- Refer to the Microsoft standard license for a complete list of limitations.

- Only asynchronous commit mode is currently supported.

- The

ccxdbis currently the only supported Always On enabled database. - Scaling is not supported as the standard license does not permit more than two nodes.

User-created databases (not Always On) are not transferred to the replica

- In the Always On configuration, only the

ccxdbis replicated. - Data loss may occur for other user-created databases, as they are not transferred to the replica during the add node process. Therefore, they may be lost if a failover, automatic repair, or any other life-cycle management event occurs.

4.6.5.2.3 - Overview

CCX supports two Microsoft SQLServer 2022 configurations:

- Single-node (No high-availability)

- Always-on, two nodes, async-commit mode (high-availability) in an primary-replica configuration.

The ‘standard’ license is applied.

Scaling

Scaling is not supported in SQLServer as of the standard license.

Storage

- Maximum size depends on the service provider and instance size

- Volume type cannot currently be changed

Further Reading

4.6.5.2.4 - User Management

CCX supports creating database users from the web interface.

The database user is created as follows:

CREATE LOGIN username WITH PASSWORD = 'SECRET', DEFAULT_DATABASE=[master], CHECK_EXPIRATION=OFF, CHECK_POLICY=OFF

ALTER SERVER ROLE [sysadmin] ADD MEMBER [username]

4.6.5.3 - MySQL

4.6.5.3.1 - Backup

Percona Xtrabackup is used to create backups.

CCX backups the Primary server. In multi-primary setups the node with the highest wsrep_local_index is elected.

Backups are streamed directly to S3 staroge.

Percona Xtrabackup blocks DDL operations during the backup using the --lock-ddl flag.

Any attempt to CREATE, ALTER, DROP, TRUNCATE a table during backup creation will be locked with the status Waiting for backup lock (see SHOW FULL PROCESSLIST).

In this case, wait for the backup to finish and, perform the operation later.

Also see the section ‘Schedule’.

Schedule

The backup schedule can be tuned and backups can be paused.

4.6.5.3.2 - Configuration

max_connections

- 75 connections / GB of RAM.

- Example: 4GB of RAM yields 300 connections.

- This setting cannot be changed as it affects system stability.

InnoDB settings

- These setting cannot be changed as it affects system stability.

innodb_buffer_pool_size

- 50% of RAM if total RAM is > 4GB

- 25% of RAM if total RAM is <= 4GB

innodb_log_file_size

- 1024 MB if innodb_buffer_pool_size >= 8192MB

- 512 MB if innodb_buffer_pool_size < 8192MB

innodb_buffer_pool_instances

- 8

InnoDB options

| variable_name | variable_value |

|---|---|

| innodb_buffer_pool_size | Depends on instance size |

| innodb_flush_log_at_trx_commit | 2 |

| innodb_file_per_table | 1 |

| innodb_data_file_path | Depends on instance size |

| innodb_read_io_threads | 4 |

| innodb_write_io_threads | 4 |

| innodb_doublewrite | 1 |

| innodb_buffer_pool_instances | Depends on instance size |

| innodb_redo_log_capacity | 8G |

| innodb_thread_concurrency | 0 |

| innodb_flush_method | O_DIRECT |

| innodb_autoinc_lock_mode | 2 |

| innodb_stats_on_metadata | 0 |

| default_storage_engine | innodb |

General options

| variable_name | variable_value |

|---|---|

| tmp_table_size | 64M |

| max_heap_table_size | 64M |

| max_allowed_packet | 1G |

| sort_buffer_size | 256K |

| read_buffer_size | 256K |

| read_rnd_buffer_size | 512K |

| memlock | 0 |

| sysdate_is_now | 1 |

| max_connections | Depends on instance size |

| thread_cache_size | 512 |

| table_open_cache | 4000 |

| table_open_cache_instances | 16 |

| lower_case_table_names | 0 |

Storage

Recommended storage size

- We recommend a maximum of 100GB storage per GB of RAM.

- Example: 4GB of RAM yields 400GB of storage.

- The recommendation is not enforced by the CCX platform.

4.6.5.3.3 - Importing Data

This procedure describes how to import data to a MySQL datastore located in CCX.

- The MySQL Datastore on CCX is denoted as the ‘replica’

- The source of the data is denoted as the ‘source’

note:

If you dont want to setup replication, then you can chose to only apply the sections:

- Create a database dump file

- Apply the dumpfile on the replica

Preparations

Ensure that the source is configured to act as a replication source:

- Binary logging is enabled.

server_idis set to non 0.

Ensure the CCX Firewall is updated:

- Add the replication source as a Trusted Source in the Firewall section of the CCX UI.

Create a replication user on the source

Create a replication user with sufficient privileges on the source:

CREATE USER 'repluser'@'%' IDENTIFIED BY '<SECRET>';

GRANT REPLICATION SLAVE ON *.* TO 'repluser'@'%';

Prepare the replica to replicate from the source

The replica must be instructed to replicate from the source:

Make sure to change <SOURCE_IP>, <SOURCE_PORT>, and <SECRET>.

CHANGE REPLICATION SOURCE TO SOURCE_HOST=<SOURCE_IP>, SOURCE_PORT=<SOURCE_PORT>, SOURCE_USER='repluser', SOURCE_PASSWORD='<SECRET>', SOURCE_SSL=1;

Create a replication filter on the replica

The replica filter prevents corruption of the datastore.

If the datastore’s system tables are corrupted using replication then the SLA is void and the datastore must be recreated.

CHANGE REPLICATION FILTER REPLICATE_IGNORE_DB=(mysql,sys, performance_schema);

Create a database dump file

The database dump contains the data that you wish to import into the replica. Only partial dumps are possible. The dump must not contains any mysql or other system databases.

On the source, issue the following command. Change USER, SECRET and DATABASES:

mysqldump --set-gtid-purged=OFF -uUSER -pSECRET --master-data --single-transaction --triggers --routines --events --databases DATABASES > dump.sql

Important! If your database dump contains SPROCs, triggers or events, then you must replace DEFINER:

sed 's/\sDEFINER=`[^`]*`@`[^`]*`//g' -i dump.sql

Apply the dumpfile on the replica

cat dump.sql | mysql -uccxadmin -p -h<REPLICA_PRIMARY>

Start the replica

On the replica do:

START REPLICA;

followed by

SHOW REPLICA STATUS;

And verify that:

Replica_IO_State: Waiting for source to send event

..

Replica_IO_Running: Yes

Replica_SQL_Running: Yes

When the migration is ready

STOP REPLICA;

RESET REPLICA ALL;

CHANGE REPLICATION FILTER REPLICATE_IGNORE_DB=();

Troubleshooting

If the replication fails to start then verify:

- All the steps above has been followed.

- Ensure that the replication source is added as a Trusted Source in the Firewall section of the CCX UI.

- Ensure that you have the correct IP/FQDN of the replication source.

- Ensure that users are created correctly and using the correct password.

- Ensure that the dump is fresh.

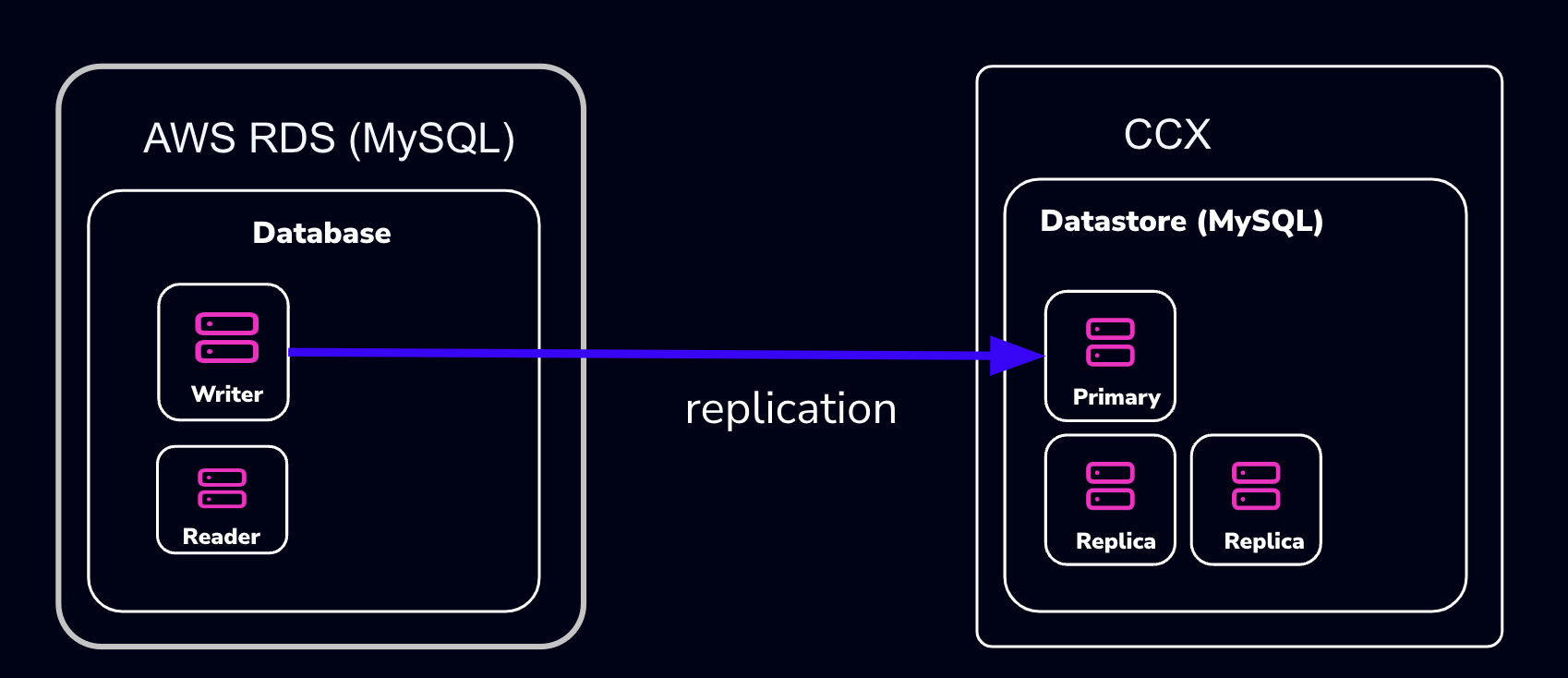

4.6.5.3.4 - Importing Data From AWS RDS

This procedure describes how to import data from Amazon RDS to a MySQL datastore located in CCX.

- The MySQL Datastore on CCX is referred to as the ‘CCX Primary’

- The RDS Source of the data is referred to as the ‘RDS Writer’

Schematically, this is what we will set up:

warning:

AWS RDS makes it intentionally difficult to migrate away from. Many procedures on the internet, as well as AWS’s own procedures, will not work.

The migration we suggest here (and is the only one we know works) requires that the RDS Writer instance be blocked for writes until a mysqldump has been completed. However, AWS RDS blocks operations such as

FLUSH TABLES WITH READ LOCK:mysqldump: Couldn't execute 'FLUSH TABLES WITH READ LOCK': Access denied for user 'admin'@'%' (using password: YES) (1045)Therefore, the actual applications must be blocked from writing.

Also, some procedures on the internet suggest creating a read-replica. This will not work either, as the AWS RDS Read-replica is crippled and lacks GTID support.

note:

If you don’t want to set up replication, you can choose to only apply the following sections:

- Create a database dump file of the RDS Writer

- Apply the dump file on the CCX replica

Also, practice this a few times before you actually do the migration.

Preparations

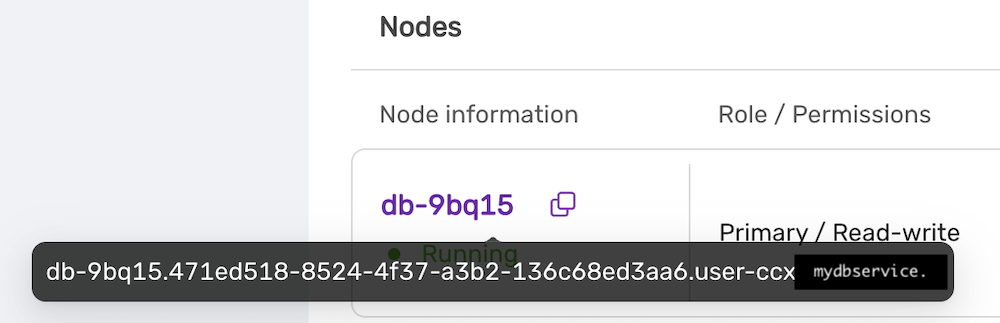

- Create a datastore on CCX. Note that you can also replicate from MySQL 8.0 to MySQL 8.4.

- Get the endpoint of the CCX Primary (under the Nodes section).

The endpoint in our case is

The endpoint in our case is db-9bq15.471ed518-8524-4f37-a3b2-136c68ed3aa6.user-ccx.mydbservice.net. - Get the endpoint of the RDS Writer. In this example, the endpoint is

database-1.cluster-cqc4xehkpymd.eu-north-1.rds.amazonaws.com - Update the Security group on AWS RDS to allow the IP address of the CCX Primary to connect. To get the IP address of the CCX Primary, run:

dig db-9bq15.471ed518-8524-4f37-a3b2-136c68ed3aa6.user-ccx.mydbservice.net - Ensure you can connect a MySQL client to both the CCX Primary and the RDS Writer.

Create a Replication User On the RDS Writer Instance

Create a replication user with sufficient privileges on the RDS Writer.

In the steps below, we will use repl and replpassword as the credentials when setting up the replica on CCX.

CREATE USER 'repl'@'%' IDENTIFIED BY 'replpassword';

GRANT REPLICATION SLAVE ON *.* TO 'repluser'@'%'; #mysql 8.0

GRANT REPLICATION REPLICATION_SLAVE_ADMIN ON *.* TO 'repluser'@'%';

Block Writes to the RDS Writer Instance

This is the most challenging part. You must ensure your applications cannot write to the Writer instance.

Unfortunately, AWS RDS blocks operations like FLUSH TABLES WITH READ LOCK.

Create a Consistent Dump

Assuming that writes are now blocked on the RDS Writer Instance, you must get the binary log file and the position of the RDS Writer instance.

Get the Replication Start Position

The start position (binary log file name and position) is used to tell the replica where to start replicating data from.

MySQL 8.0: SHOW MASTER STATUS\G

MySQL 8.4 and later: SHOW BINARY LOG STATUS\G

It will output:

*************************** 1. row ***************************

File: mysql-bin-changelog.000901

Position: 584

Binlog_Do_DB:

Binlog_Ignore_DB:

Executed_Gtid_Set: 796aacf3-24ed-11f0-949d-0605a27ab4b9:1-876

1 row in set (0.02 sec)

Record the File: mysql-bin-changelog.000901 and the Position: 584 as they will be used to set up replication.

Create the mysqldump

Be sure to specify the database you wish to replicate. You must omit any system databases. In this example, we will dump the databases prod and crm.

mysqldump -uadmin -p -hdatabase-1.cluster-cqc4xehkpymd.eu-north-1.rds.amazonaws.com --databases prod crm --triggers --routines --events --set-gtid_purged=OFF --single-transaction > dump.sql

Wait for it to complete.

Unblock Writes to the RDS Writer Instance

At this stage, it is safe to enable application writes again.

Load the Dump On the Replica

Create a Replication Filter On the Replica

The replica filter prevents corruption of the datastore, and we are not interested in changes logged by AWS RDS to mysql.rds* tables anyway. Also add other databases that you do not wish to replicate to the filter.

note:

If the CCX datastore’s system tables are corrupted using replication, then the datastore must be recreated.

CHANGE REPLICATION FILTER REPLICATE_IGNORE_DB=(mysql, sys, performance_schema);

Important! If your database dump contains stored procedures, triggers, or events, then you must replace DEFINER:

sed 's/\sDEFINER=`[^`]*`@`[^`]*`//g' -i dump.sql

Apply the Dump File On the CCX Primary:

cat dump.sql | mysql -uccxadmin -p -hCCX_PRIMARY

Connect the CCX Primary to the RDS Writer Instance

The CCX Primary must be instructed to replicate from the RDS Writer. We have the binlog file and position from the earlier step:

- mysql-bin-changelog.000901

- 584

CHANGE REPLICATION SOURCE TO SOURCE_HOST='database-1.cluster-cqc4xehkpymd.eu-north-1.rds.amazonaws.com', SOURCE_PORT=3306, SOURCE_USER='repl', SOURCE_PASSWORD='replpassword', SOURCE_SSL=1, SOURCE_LOG_FILE='mysql-bin-changelog.000901', SOURCE_LOG_POS=584;

Start the Replica

On the replica, run:

START REPLICA;

followed by:

SHOW REPLICA STATUS;

And verify that:

Replica_IO_State: Waiting for source to send event

...

Replica_IO_Running: Yes

Replica_SQL_Running: Yes

When the Migration is Ready

At some point, you will need to point your applications to the new datastore. Ensure:

- Prevent writes to the RDS Writer

- Make sure the CCX Primary has applied all data (use

SHOW REPLICA STATUS) - Connect the applications to the new datastore

STOP REPLICA;

RESET REPLICA ALL;

CHANGE REPLICATION FILTER REPLICATE_IGNORE_DB=();

Troubleshooting

If the replication fails to start, verify:

- All the steps above have been followed

- Ensure that the IP address of the CCX Primary is added to the security group used by the RDS Writer instance

- Ensure that you have the correct IP/FQDN of the RDS Writer instance

- Ensure that users are created correctly and using the correct password

- Ensure that the dump is fresh

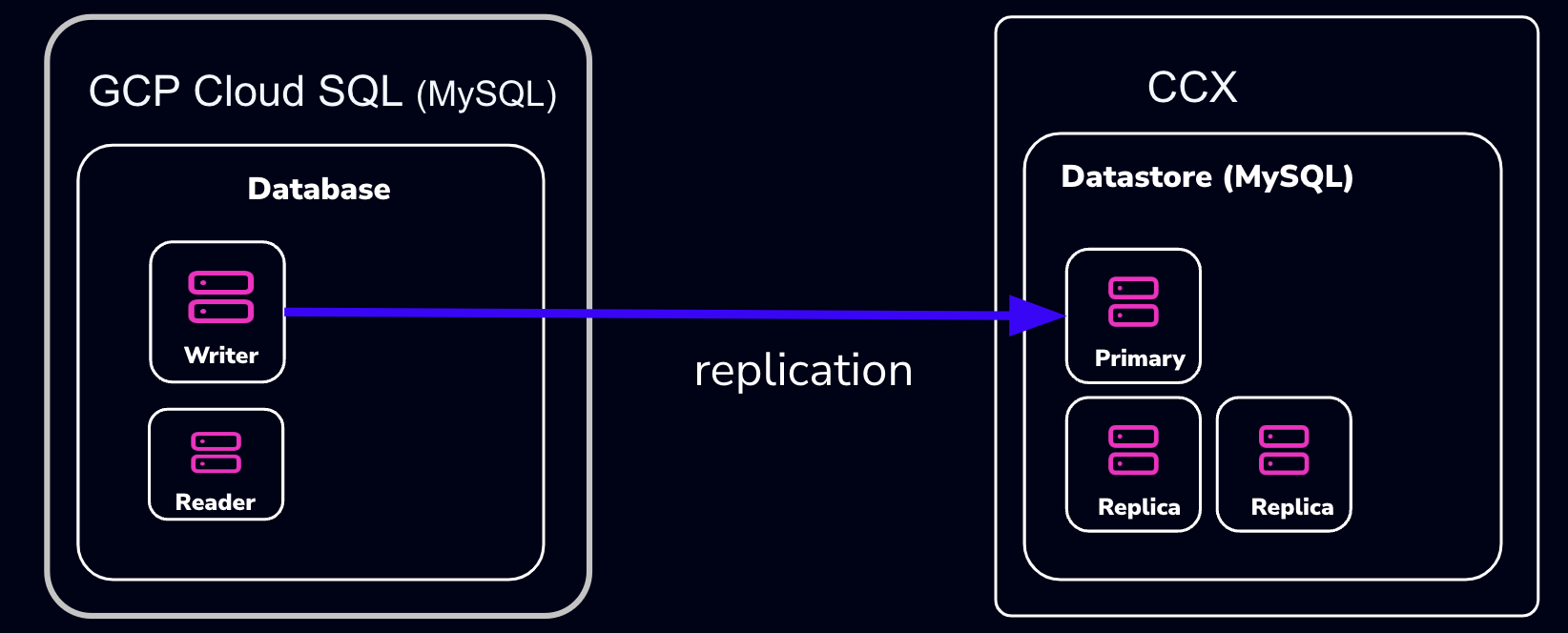

4.6.5.3.5 - Importing Data From GCP

This procedure describes how to import data from Google Cloud SQL to a MySQL datastore located in CCX.

- The MySQL Datastore on CCX is referred to as the ‘CCX Primary’

- The GCP Source of the data is referred to as the ‘GCP Primary’

Schematically, this is what we will set up:

note:

If you don’t want to set up replication, you can choose to only apply the following sections:

- Create a database dump file of the GCP Primary

- Apply the dump file on the CCX replica

Also, practice this a few times before you actually do the migration.

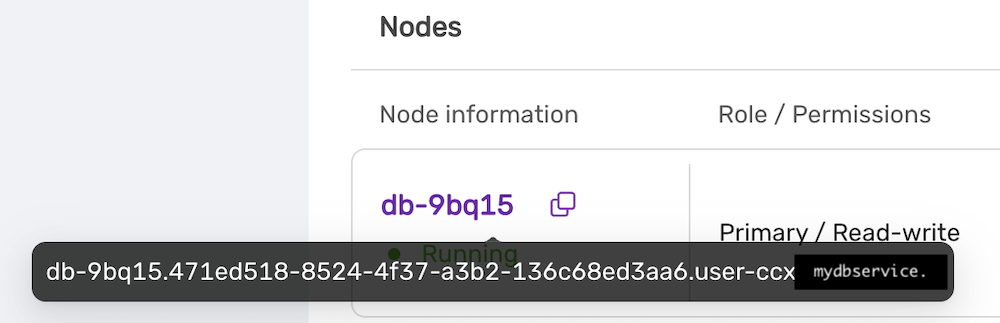

Preparations

- Create a datastore on CCX. Note that you can also replicate from MySQL 8.0 to MySQL 8.4.

- Get the endpoint of the CCX Primary (under the Nodes section).

The endpoint in our case is

The endpoint in our case is db-9bq15.471ed518-8524-4f37-a3b2-136c68ed3aa6.user-ccx.mydbservice.net. - The GCP Primary must have a Public IP.

- Get the endpoint of the GCP Primary. In this example, the endpoint is

34.51.xxx.xxx - Update the Security group on GCP to allow the IP address of the CCX Primary to connect. To get the IP address of the CCX Primary, run:

dig db-9bq15.471ed518-8524-4f37-a3b2-136c68ed3aa6.user-ccx.mydbservice.net - Ensure you can connect a MySQL client to both the CCX Primary and the GCP Primary.

Create a Replication User on the GCP Primary Instance

Create a replication user with sufficient privileges on the GCP Primary.

In the steps below, we will use repl and replpassword as the credentials when setting up the replica on CCX.

CREATE USER 'repl'@'%' IDENTIFIED BY 'replpassword';

GRANT REPLICATION SLAVE ON *.* TO 'repluser'@'%'; #mysql 8.0

GRANT REPLICATION REPLICATION_SLAVE_ADMIN ON *.* TO 'repluser'@'%';

Create the mysqldump

Be sure to specify the database you wish to replicate. You must omit any system databases. In this example, we will dump the databases prod and crm.

mysqldump -uroot -p -h34.51.xxx.xxx --databases prod crm --triggers --routines --events --set-gtid_purged=OFF --source-data --single-transaction > dump.sql

Wait for it to complete.

Load the Dump on the Replica

Create a Replication Filter on the Replica

The replica filter prevents corruption of the datastore, and we are not interested in changes logged by GCP to mysql.rds* tables anyway. Also add other databases that you do not wish to replicate to the filter.

note:

If the CCX datastore’s system tables are corrupted using replication, then the datastore must be recreated.

CHANGE REPLICATION FILTER REPLICATE_IGNORE_DB=(mysql, sys, performance_schema);

Important! If your database dump contains stored procedures, triggers, or events, then you must replace DEFINER:

sed 's/\sDEFINER=`[^`]*`@`[^`]*`//g' -i dump.sql

Apply the Dump File on the CCX Primary:

cat dump.sql | mysql -uccxadmin -p -hCCX_PRIMARY

Connect the CCX Primary to the GCP Primary

Issue the following commands on the CCX Primary:

CHANGE REPLICATION SOURCE TO SOURCE_HOST='34.51.xxx.xxx', SOURCE_PORT=3306, SOURCE_USER='repl', SOURCE_PASSWORD='replpassword', SOURCE_SSL=1;

Start the Replica

On the CCX Primary, run:

START REPLICA;

followed by:

SHOW REPLICA STATUS\G

And verify that:

Replica_IO_State: Waiting for source to send event

..

Replica_IO_Running: Yes

Replica_SQL_Running: Yes

When the Migration is Ready

At some point, you will need to point your applications to the new datastore. Ensure:

- There are no application writes to the GCP Primary

- The CCX Primary has applied all data (use

SHOW REPLICA STATUS \G, check theSeconds_Behind_Master) - Connect the applications to the new datastore

Then you can clean up the replication link on the CCX Primary:

STOP REPLICA;

RESET REPLICA ALL;

CHANGE REPLICATION FILTER REPLICATE_IGNORE_DB=();

Troubleshooting

If the replication fails to start, verify:

- All the steps above have been followed

- Ensure that the IP address of the CCX Primary is added to the security group used by the GCP Primary instance

- Ensure that you have the correct IP/FQDN of the GCP Primary instance

- Ensure that users are created correctly and using the correct password

- Ensure that the dump is fresh

4.6.5.3.6 - Limitations

Every product has limitations. Here is a list MySQL limitations:

Permissions

The privilege system in MySQL is offers more capabilties than MariaDB. Hence, the ‘ccxadmin’ user has more privileges in MySQL than in MariaDB.

The ‘ccxadmin’ user has the following privileges:

- Global / all databases (

*.*):- SELECT, INSERT, UPDATE, DELETE, CREATE, DROP, RELOAD, PROCESS, REFERENCES, INDEX, ALTER, SHOW DATABASES, CREATE TEMPORARY TABLES, LOCK TABLES, EXECUTE, REPLICATION SLAVE, REPLICATION CLIENT, CREATE VIEW, REPLICATION_SLAVE_ADMIN, SHOW VIEW, CREATE ROUTINE, ALTER ROUTINE, CREATE USER, EVENT, TRIGGER, GRANT

This means that the ‘ccxadmin’ may assign privileges to users on all databases.

Restrictions:

‘ccxadmin’ is not allowed to modify the following databases

mysql.*sys.*

For those database, the following privileges have been revoked from ‘ccxadmin’:

- INSERT, UPDATE, DELETE, CREATE, DROP, REFERENCES, INDEX, ALTER, CREATE TEMPORARY TABLES, LOCK TABLES, EXECUTE, CREATE VIEW, SHOW VIEW, CREATE ROUTINE, ALTER ROUTINE, EVENT, TRIGGER

4.6.5.3.7 - Overview

CCX supports two types of MySQL clustering:

- MySQL Replication (Primary-replica configuration)

- Percona XtraDb Cluster (Multi-primary configuration)

For general purpose applications we recommend using MySQL Replication, and we only recommend to use Percona XtraDb Cluster if you are migrating from an existing application that uses Percona XtraDb Cluster.

If you are new to Percona XtraDb Cluster we stronly recommend to read about the Percona XtraDb Cluster limitations and Percona XtraDb Cluster Overview to understand if your application can benefit from Percona XtraDb Cluster.

MySQL Replication uses the standard asynchronous replication based on GTIDs.

Scaling

Storage and nodes can be scaled online.

Nodes (horizonal)

- The maximum number of database nodes in a datastore is 5.

- Multi-primary configuration must contain an odd number of nodes (1, 3 and 5).

Nodes (vertical)

A node cannot be scaled vertically currently. To scale to large instance type, then a larger instance must be added and then remove the unwanted smaller instances.

Storage

- Maximum size depends on the service provider and instance size

- Volume type cannot currently be changed

Further Reading

4.6.5.3.8 - Restore

There are two options to restore a backup:

- Restore a backup on the existing datastore

- Restore a backup on a new datastore

Please note that restoring a backup may be a long running process.

This option allows to restore a backup with point in time recovery. The WAL logs are replayed until the desired PITR. Warning! Running several restores may change the timelines.

This option allows to restore a backup on a new datastore. This option does not currently support PITR.

4.6.5.3.9 - TLS Connection

SSL Modes

CCX currently supports connections to MySQL in two SSL modes:

-

REQUIRED: This mode requires an SSL connection. If a client attempts to connect without SSL, the server rejects the connection. -

VERIFY_CA: This mode requires an SSL connection and the server must verify the client’s certificate against the CA certificates that it has.

CA Certificate

The Certificate Authority (CA) certificate required for VERIFY_CA mode can be downloaded from your datastore on CCX using an API call or through the user interface on page https://{your_ccx_domain}/projects/default/data-stores/{datastore_id}/settings.

This certificate is used for the VERIFY_CA SSL mode.

Example Commands

Here are example commands for connecting to the MySQL server using the two supported SSL modes:

-

REQUIREDmode:mysql --ssl-mode=REQUIRED -u username -p -h hostname -

VERIFY_CAmode:mysql --ssl-mode=VERIFY_CA --ssl-ca=ca.pem -u username -p -h hostname

require_secure_transport

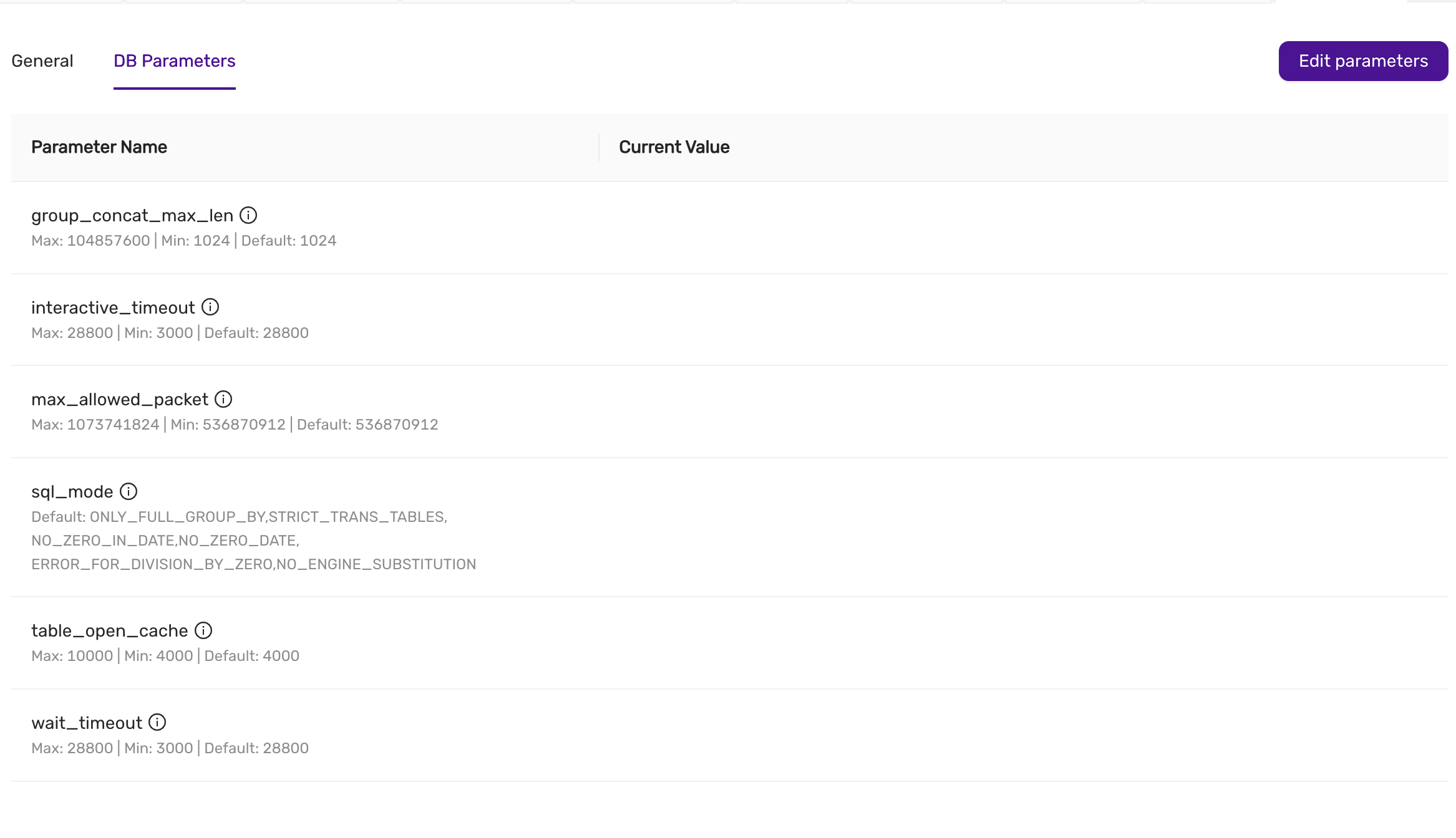

This is a MySQL setting that governs if connections to the datastore are required to use SSL. You can change this setting in CCX in Settings -> DB Parameters:

| Scenario | Server Parameter Settings | Description |

|---|---|---|

| Disable SSL enforcement | require_secure_transport = OFF |

This is the default to support legacy applications. If your legacy application doesn’t support encrypted connections, you can disable enforcement of encrypted connections by setting require_secure_transport=OFF. However, connections are encrypted unless SSL is disabled on the client. See examples |

| Enforce SSL | require_secure_transport = ON |

This is the recommended configuration. |

Examples

ssl-mode=DISABLED and require_secure_transport=OFF

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=disabled

...

mysql> \s

--------------

...

Connection id: 52

Current database: ccxdb

Current user: ccxadmin@...

*SSL: Not in use*

Current pager: stdout

...

ssl-mode=PREFERRED and require_secure_transport=OFF

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=preferred

...

mysql> \s

--------------

...

Connection id: 52

Current database: ccxdb

Current user: ccxadmin@...

SSL: Cipher in use is TLS_AES_256_GCM_SHA384

Current pager: stdout

...

ssl-mode=DISABLED and require_secure_transport=ON

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=disabled

mysql: [Warning] Using a password on the command line interface can be insecure.

ERROR 3159 (08004): Connections using insecure transport are prohibited while --require_secure_transport=ON.

ssl-mode=PREFERRED|REQUIRED and require_secure_transport=ON

mysql -uccxadmin -p -h... -P3306 ccxdb --ssl-mode=preferred|required

mysql> \s

--------------

...

Connection id: 52

Current database: ccxdb

Current user: ccxadmin@...

SSL: Cipher in use is TLS_AES_256_GCM_SHA384

Current pager: stdout

...

tls_version

The tls_version is set to the following by default:

| Variable_name | Value |

|---|---|

| tls_version | TLSv1.2,TLSv1.3 |

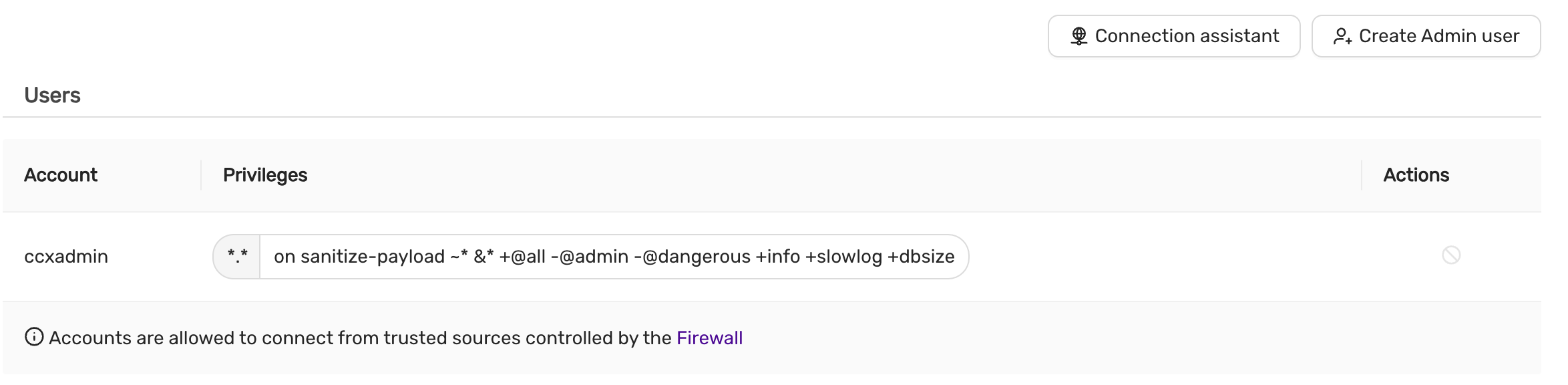

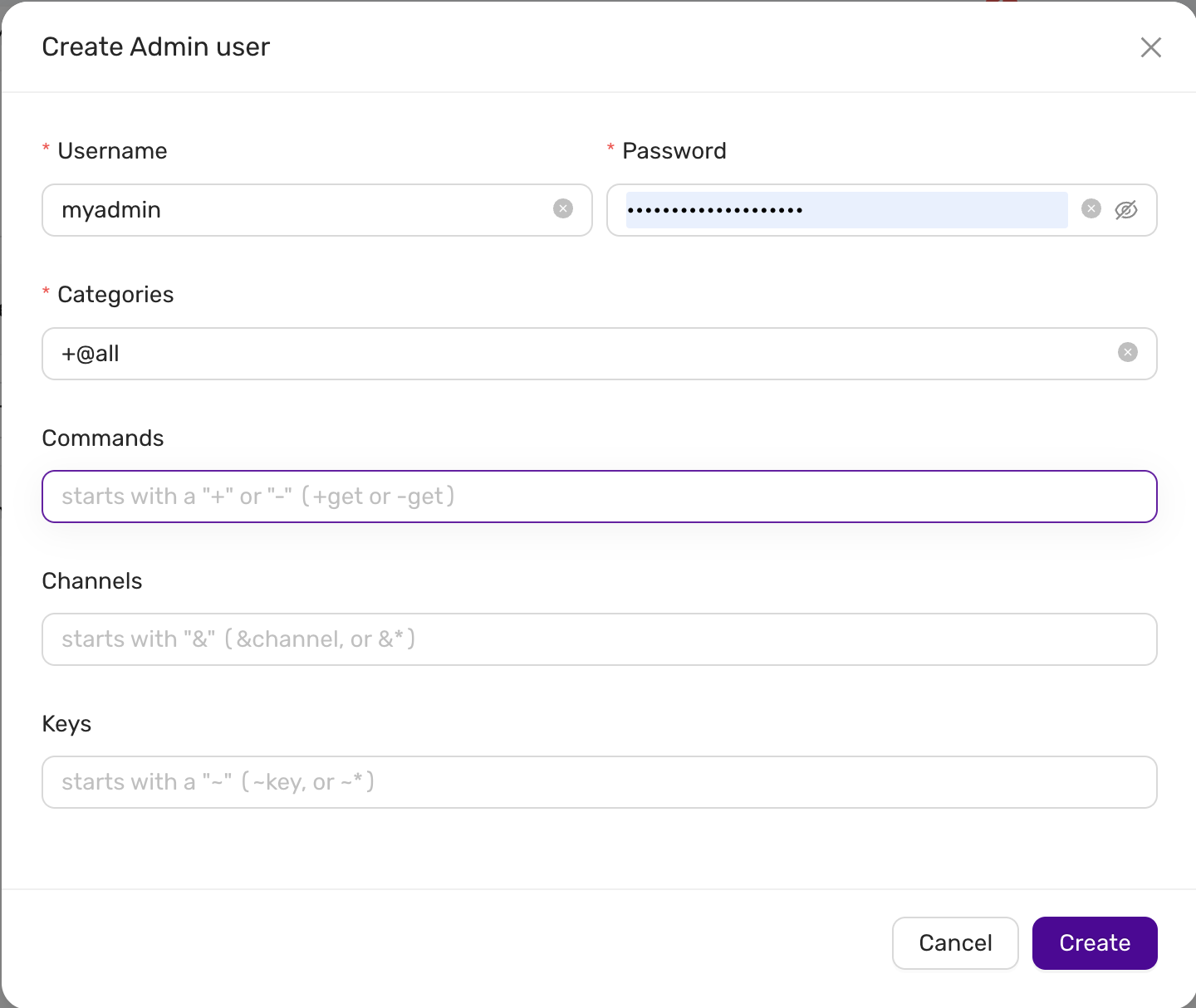

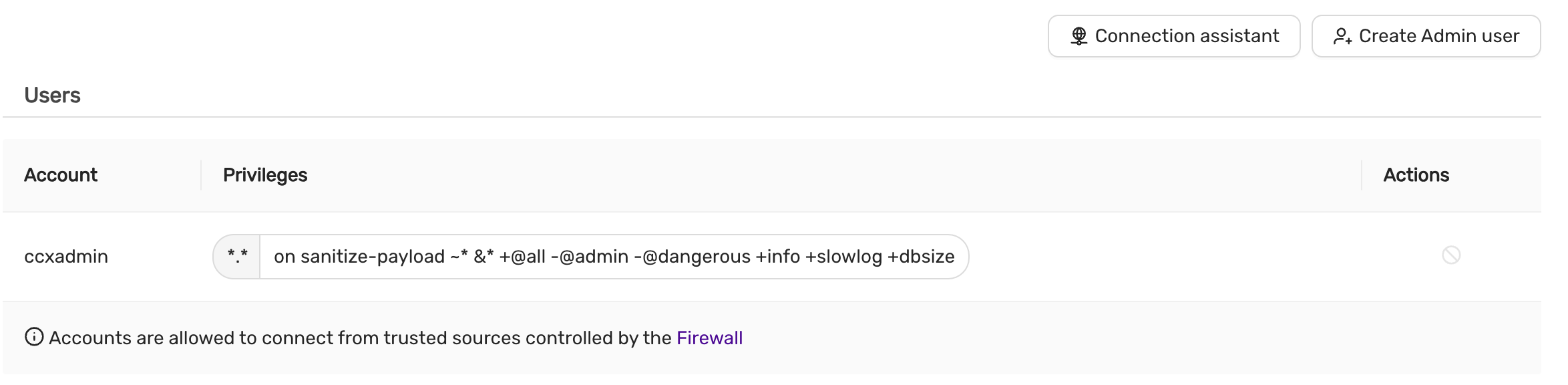

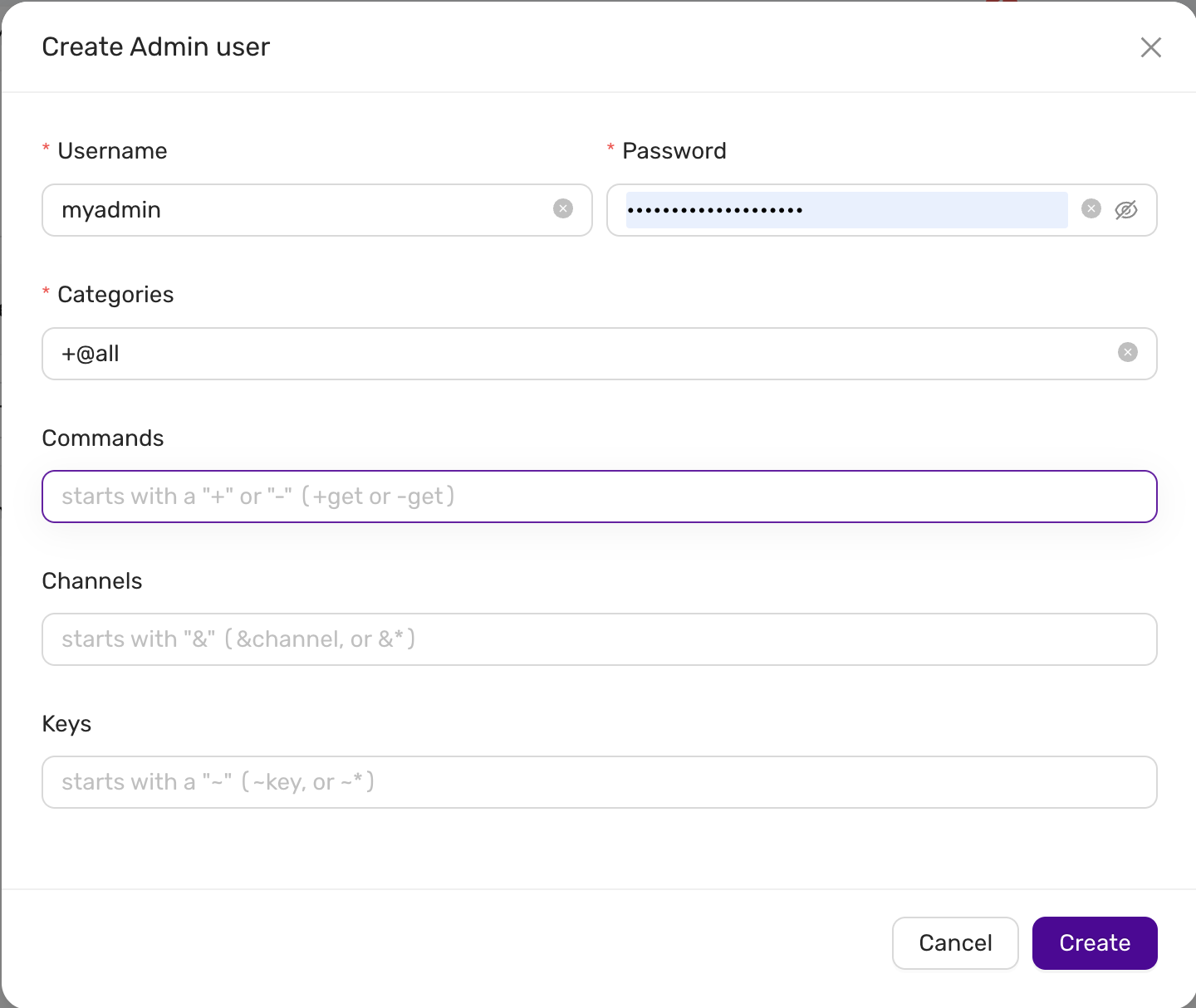

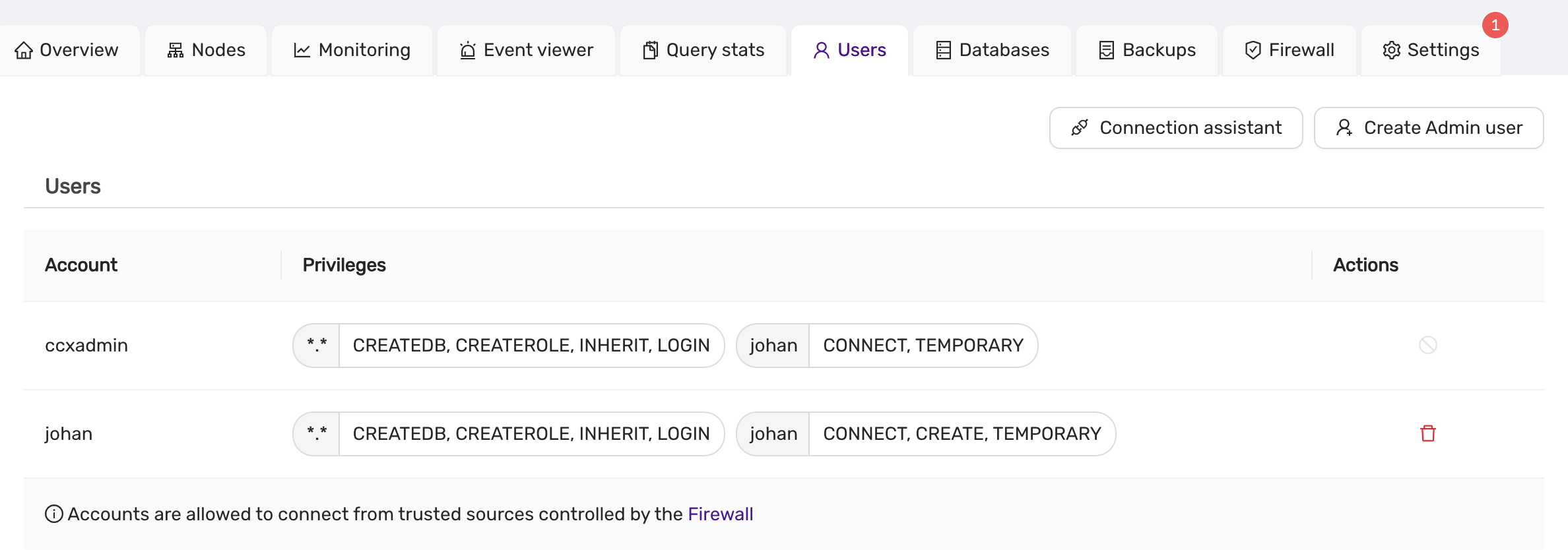

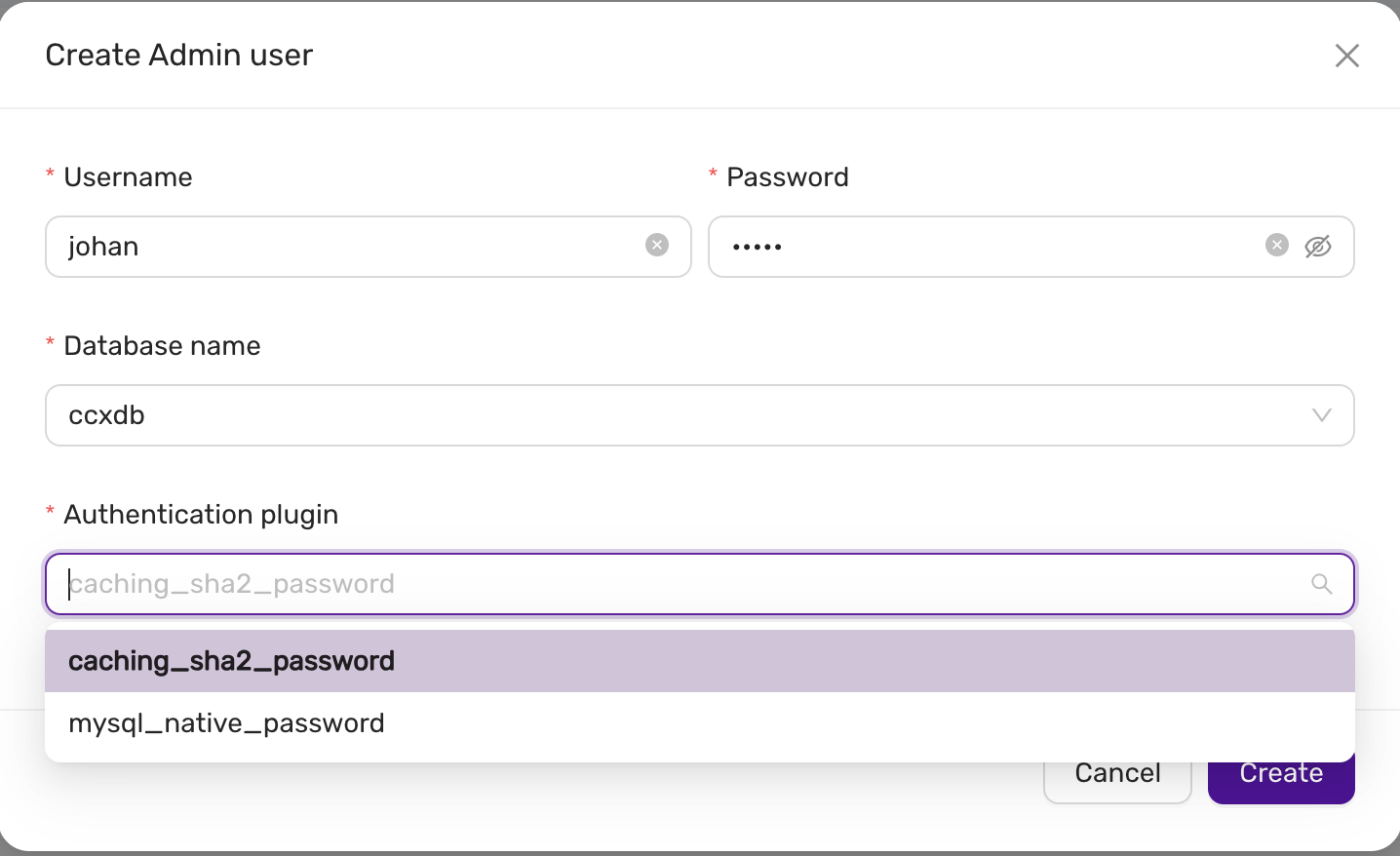

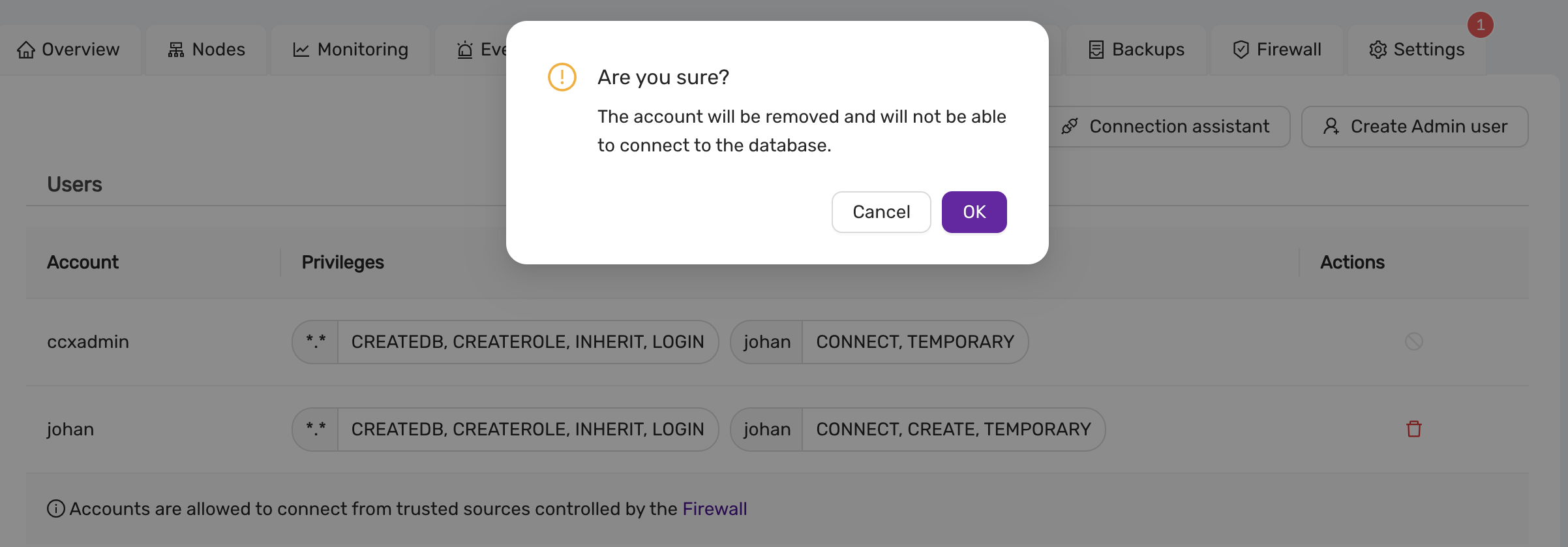

4.6.5.3.10 - User Management

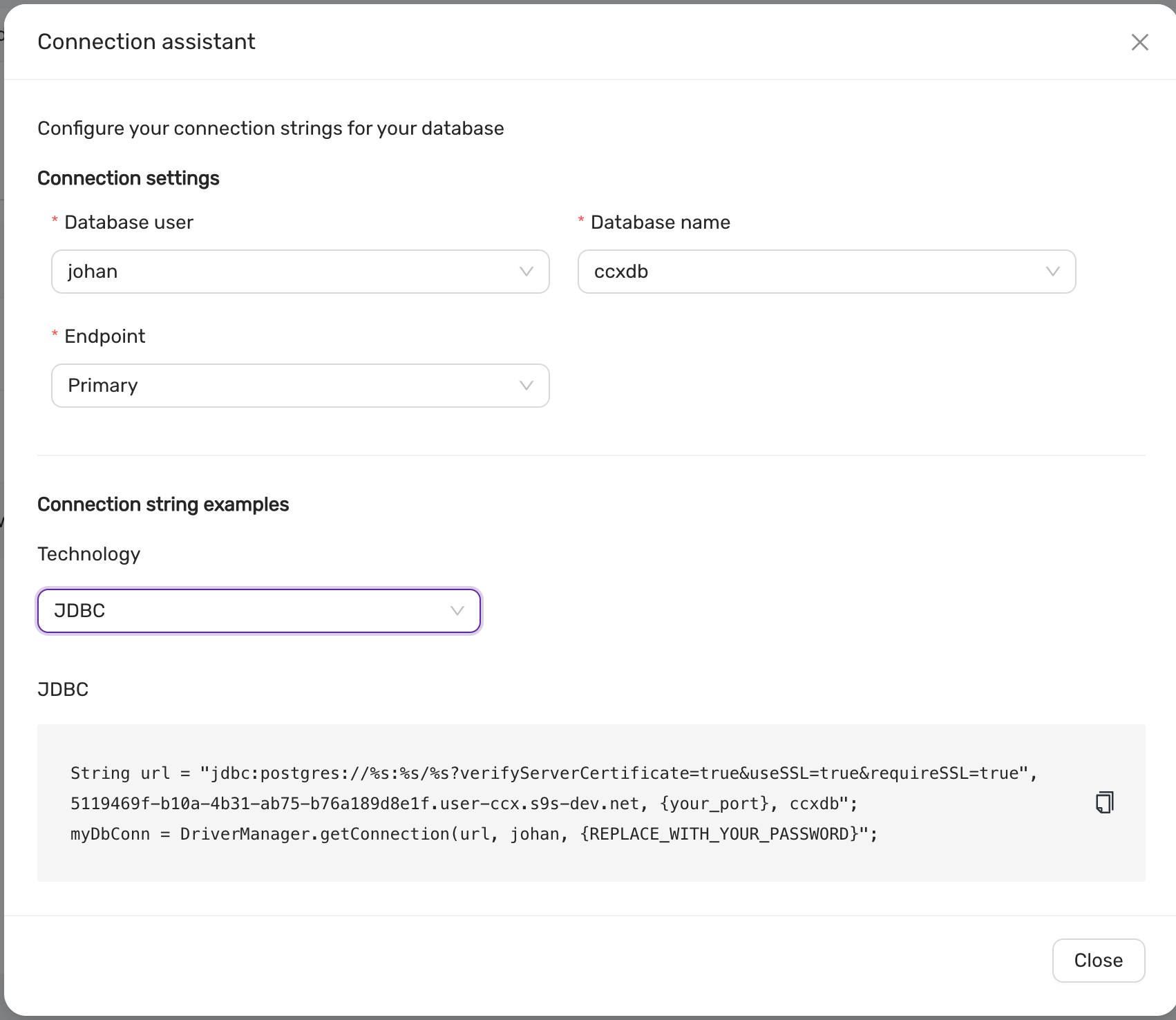

CCX supports creating database users from the web interface. The database user has the following privileges:

- Global / all databases (

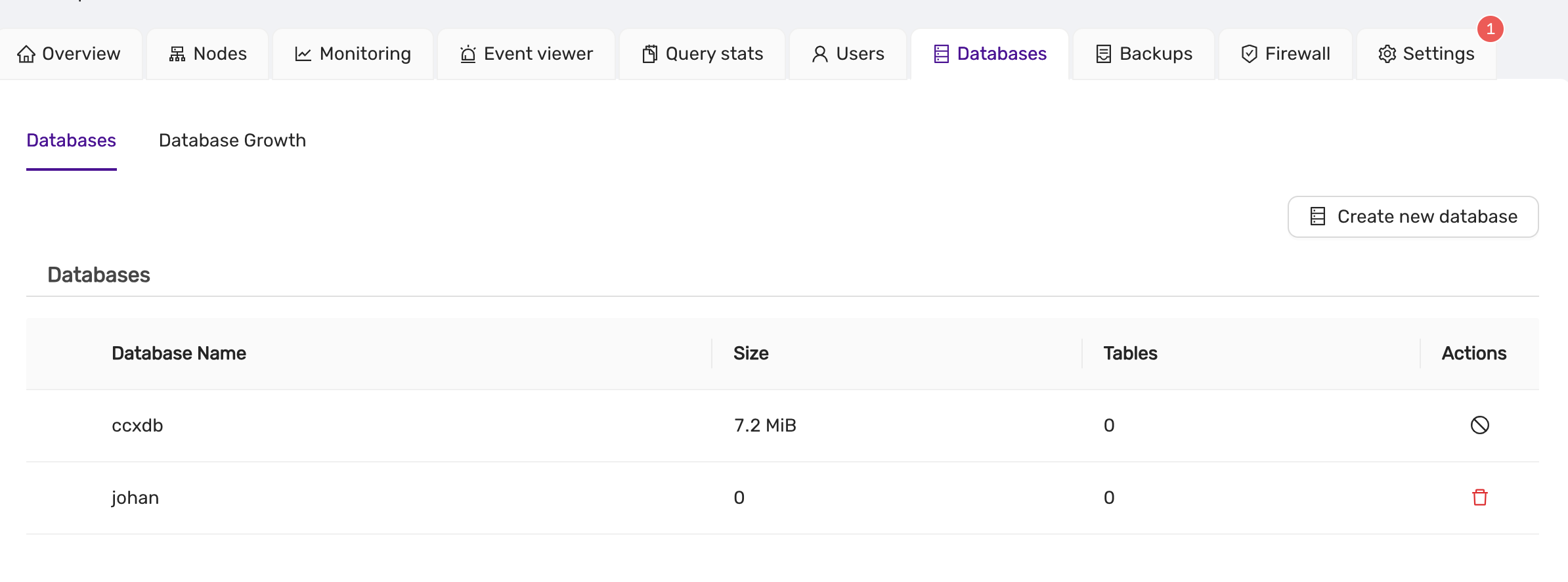

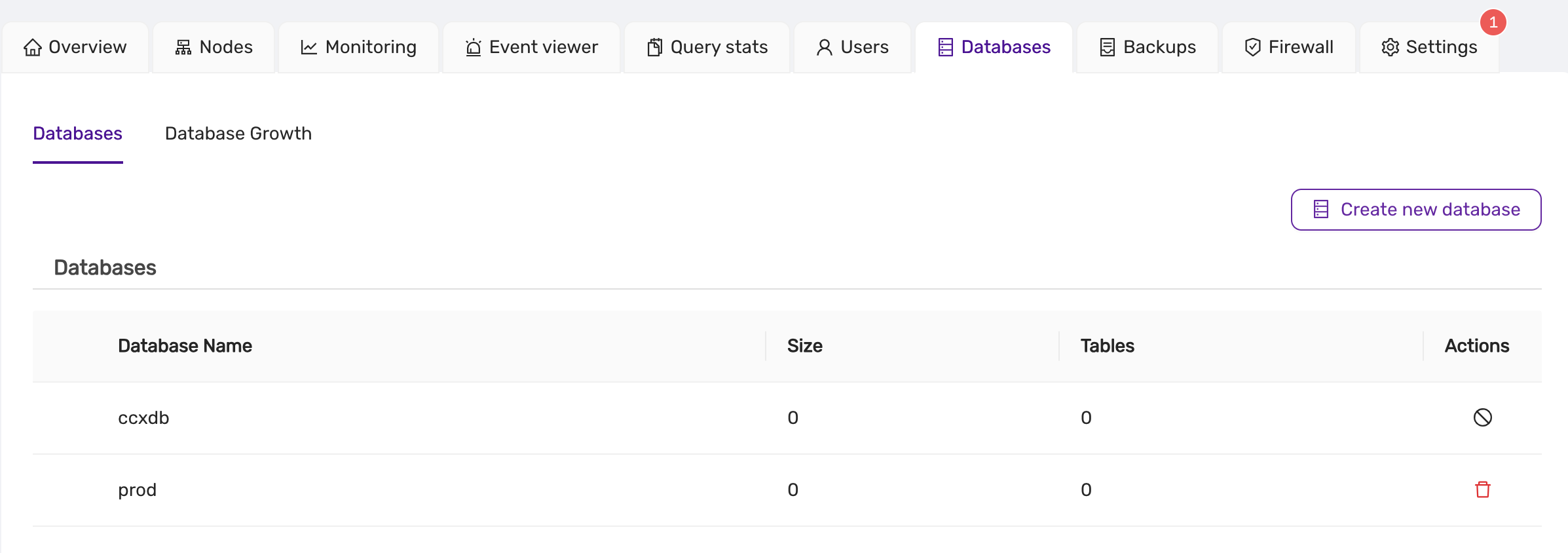

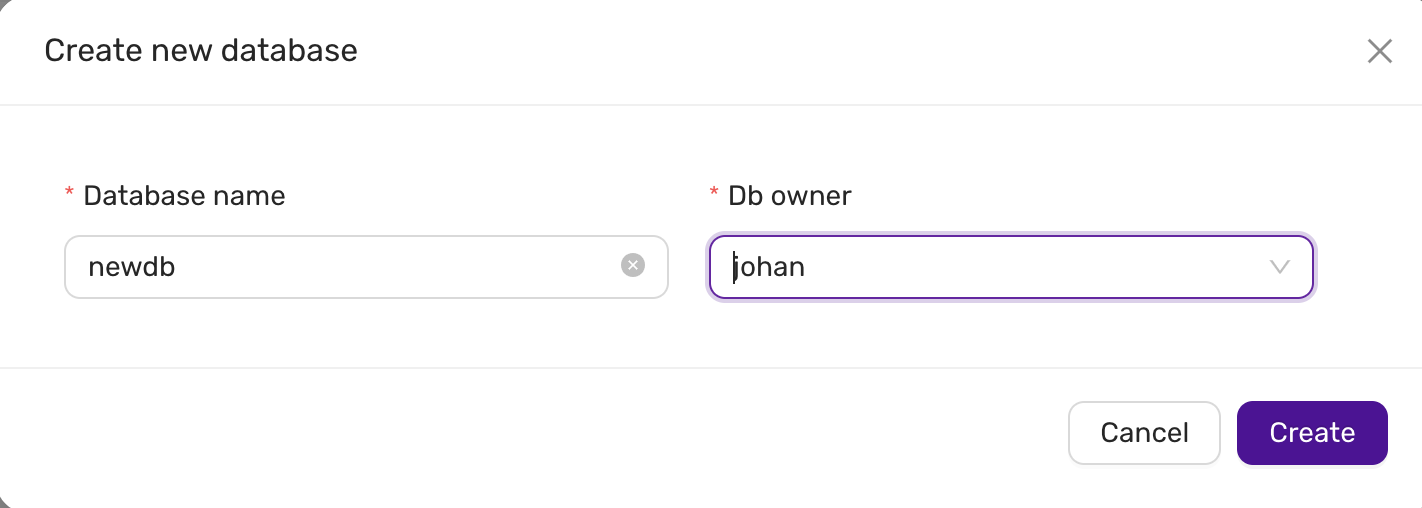

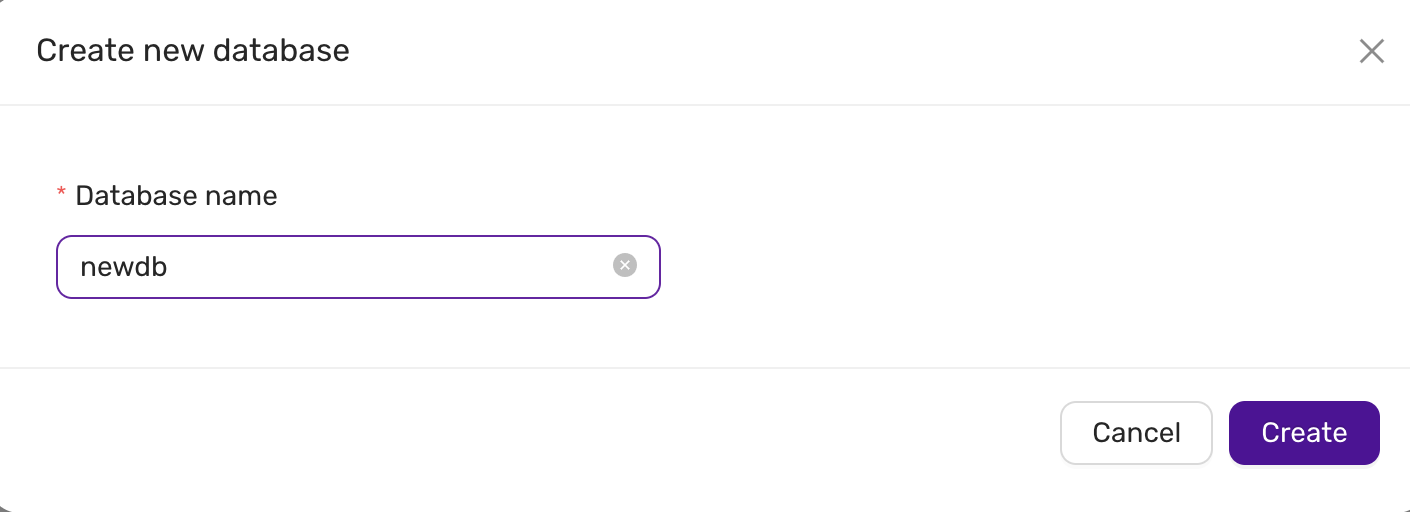

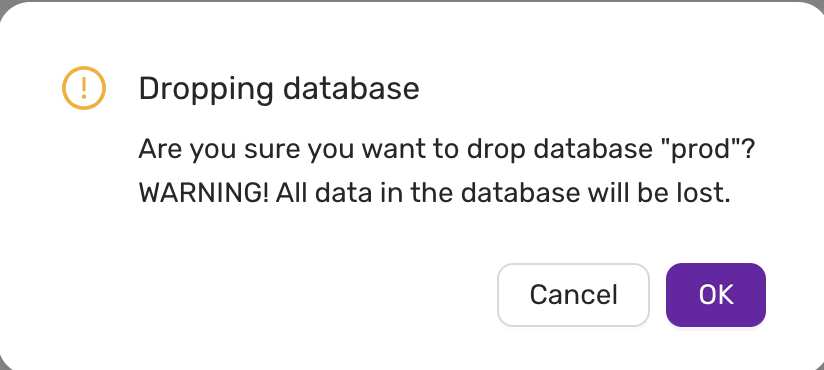

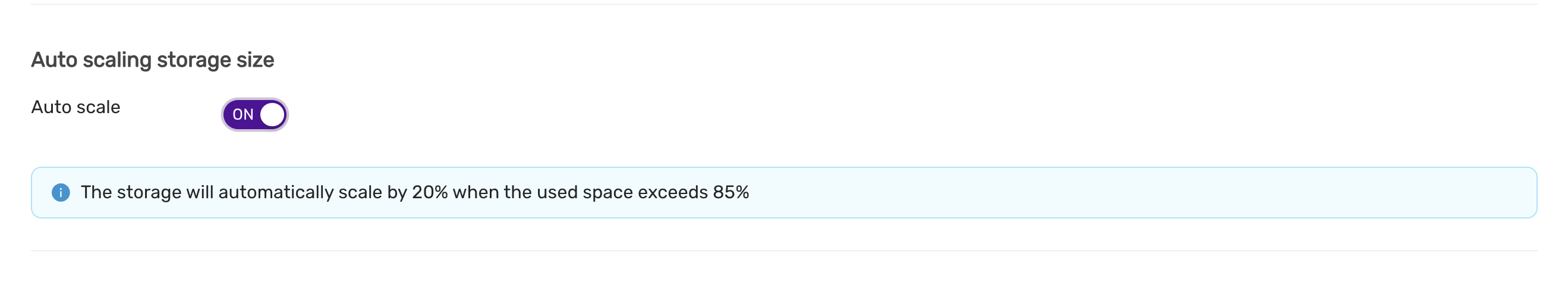

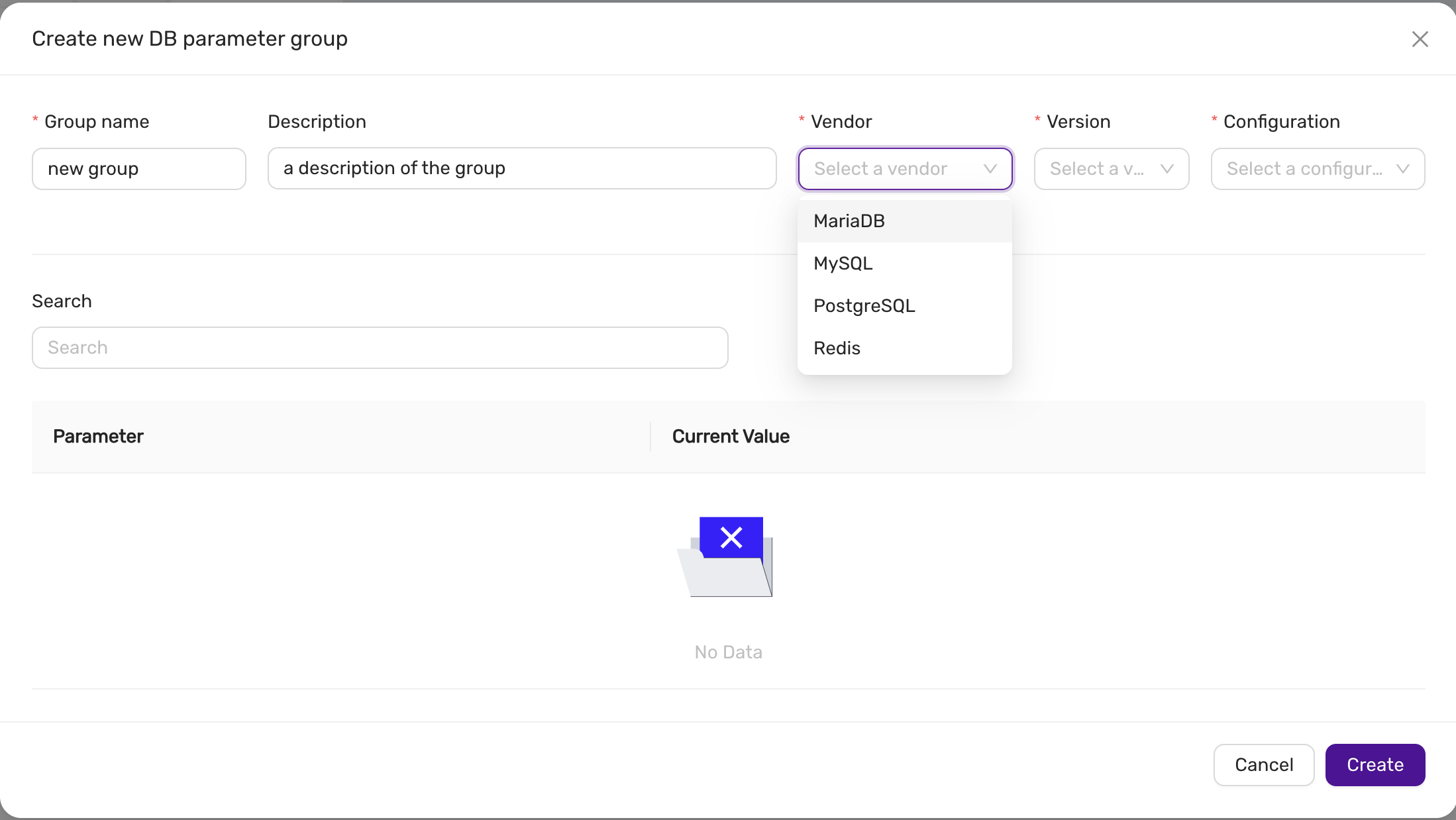

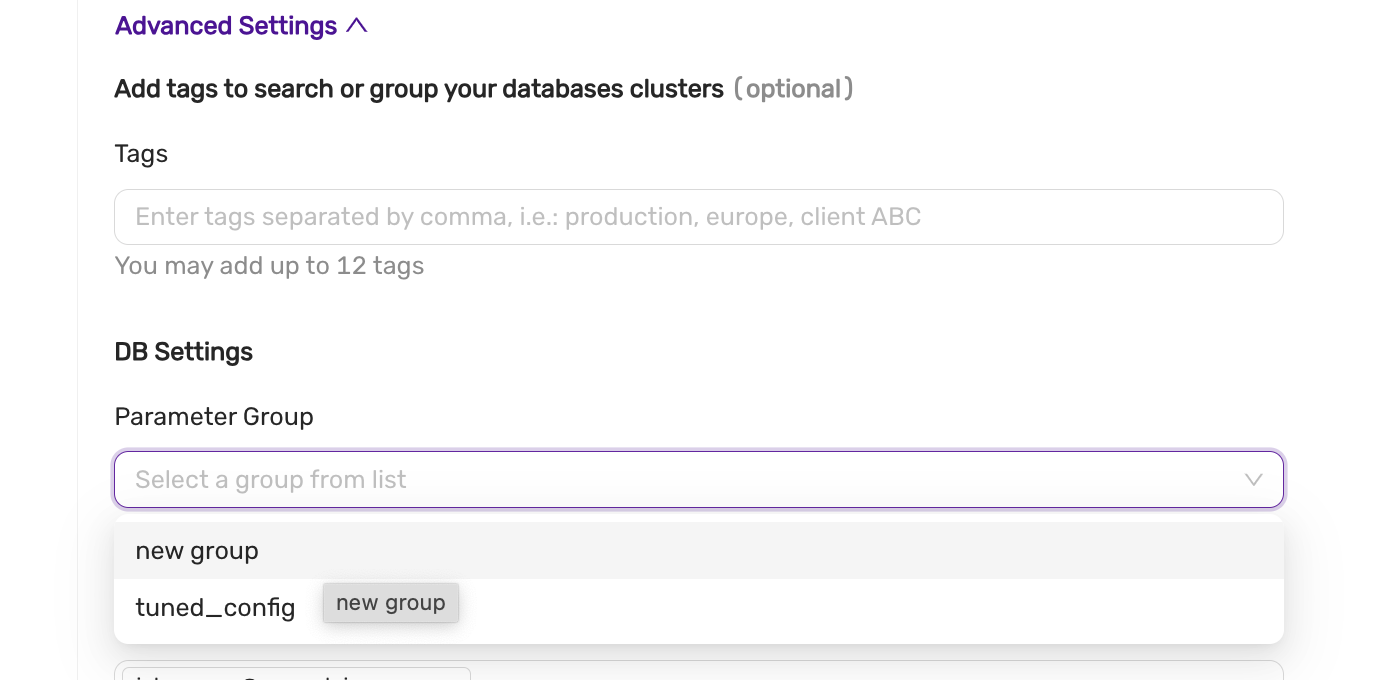

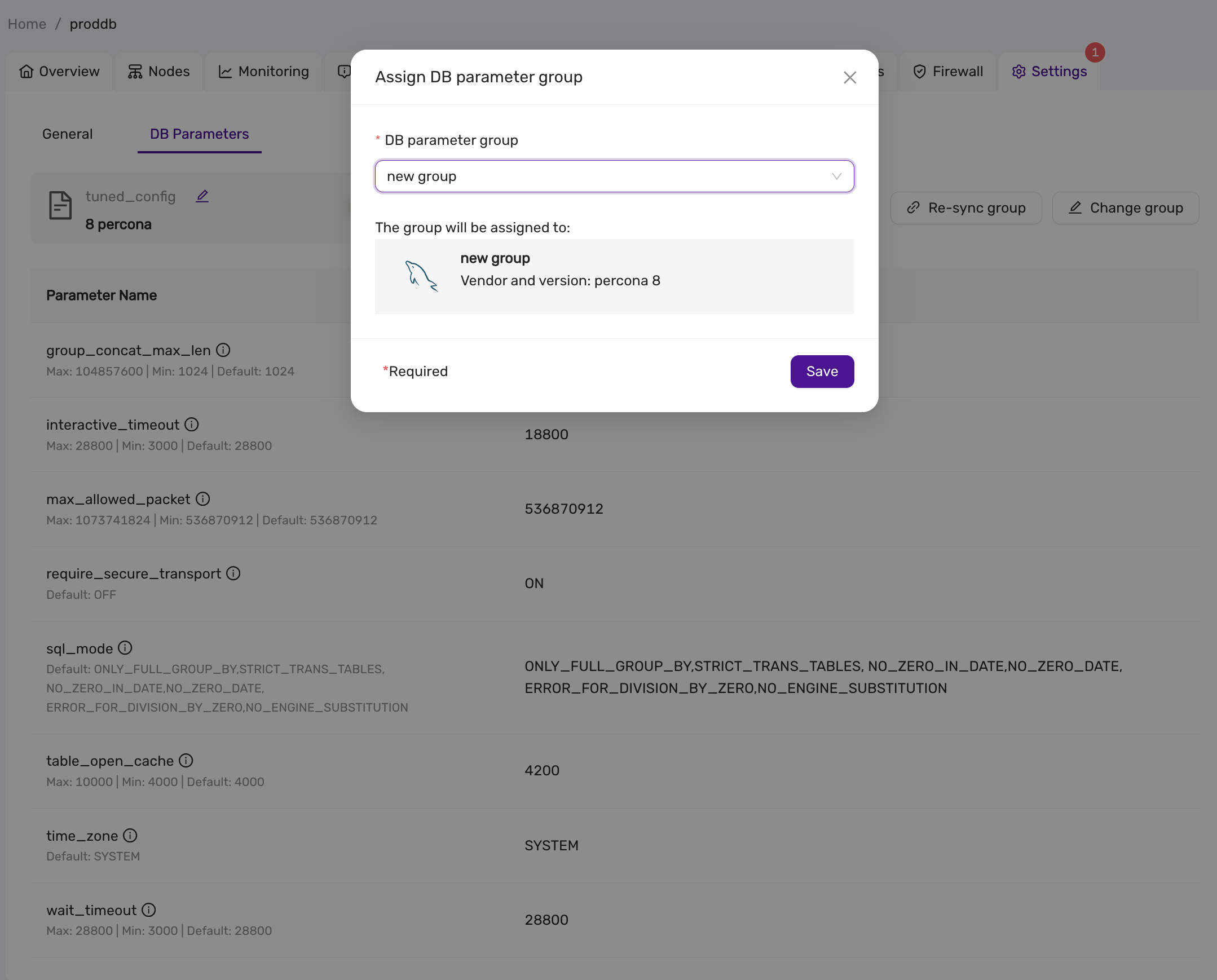

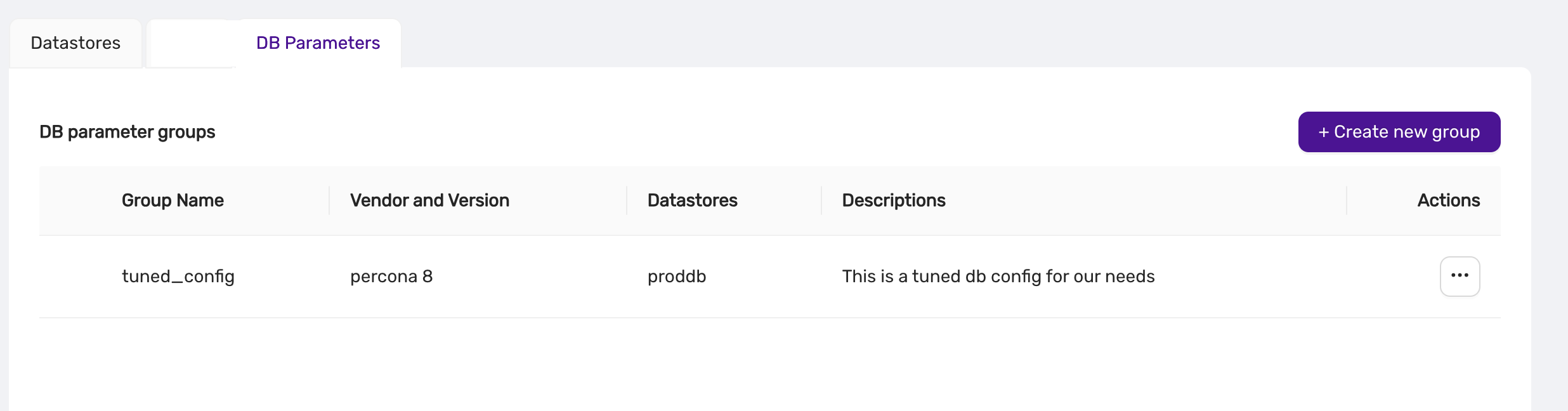

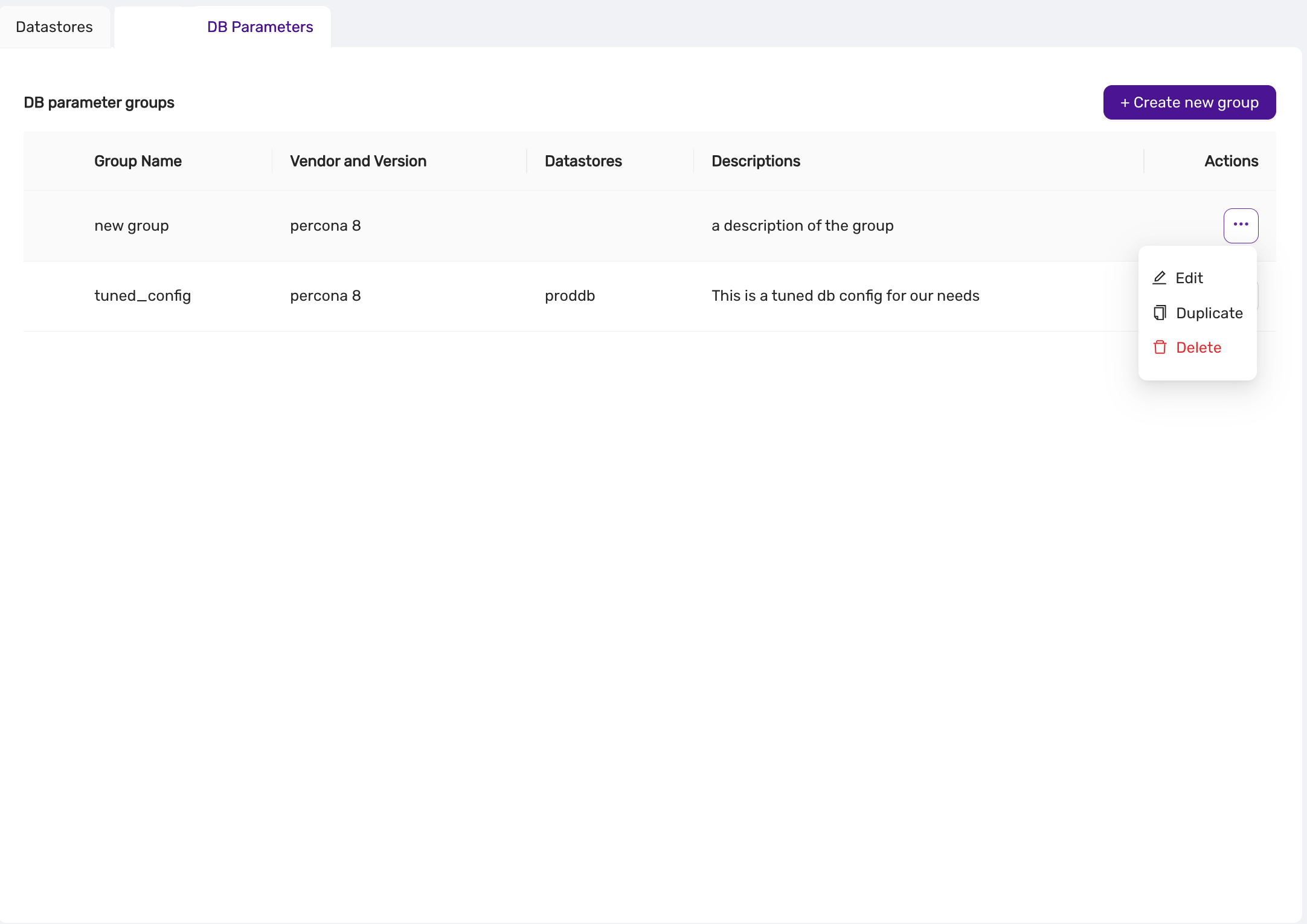

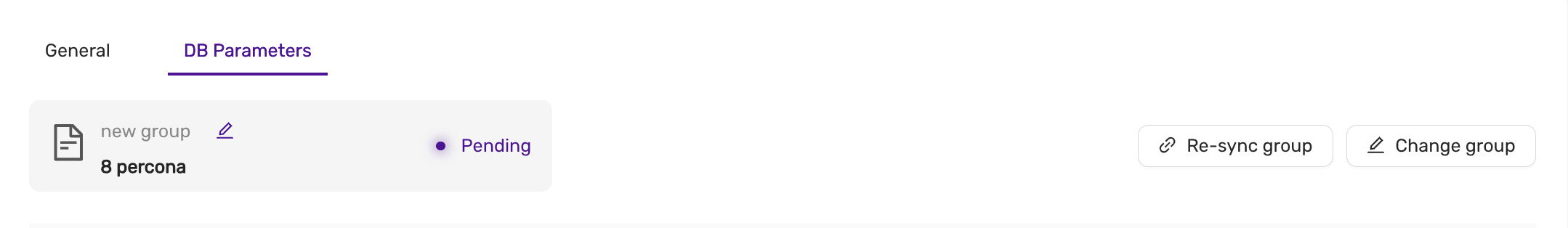

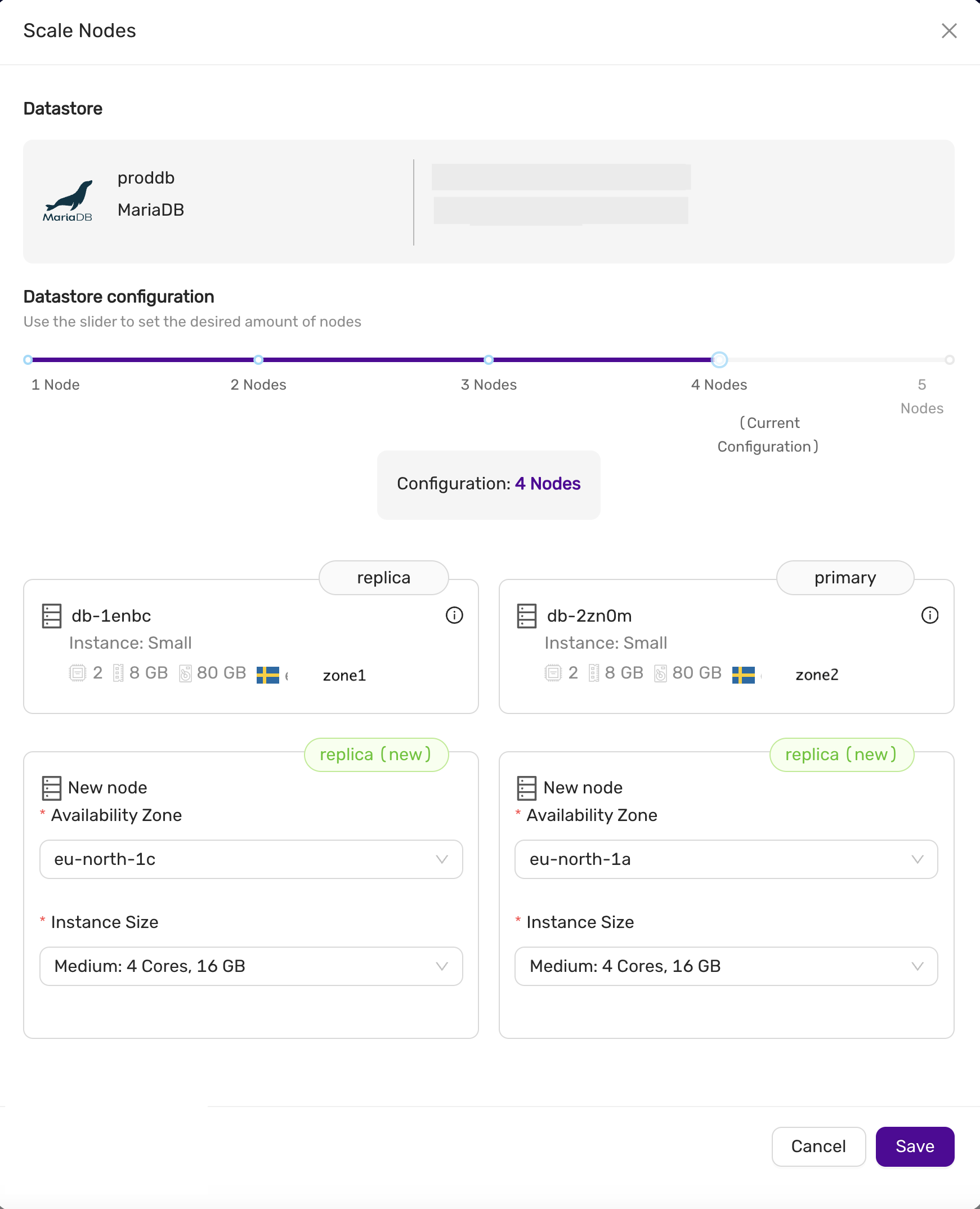

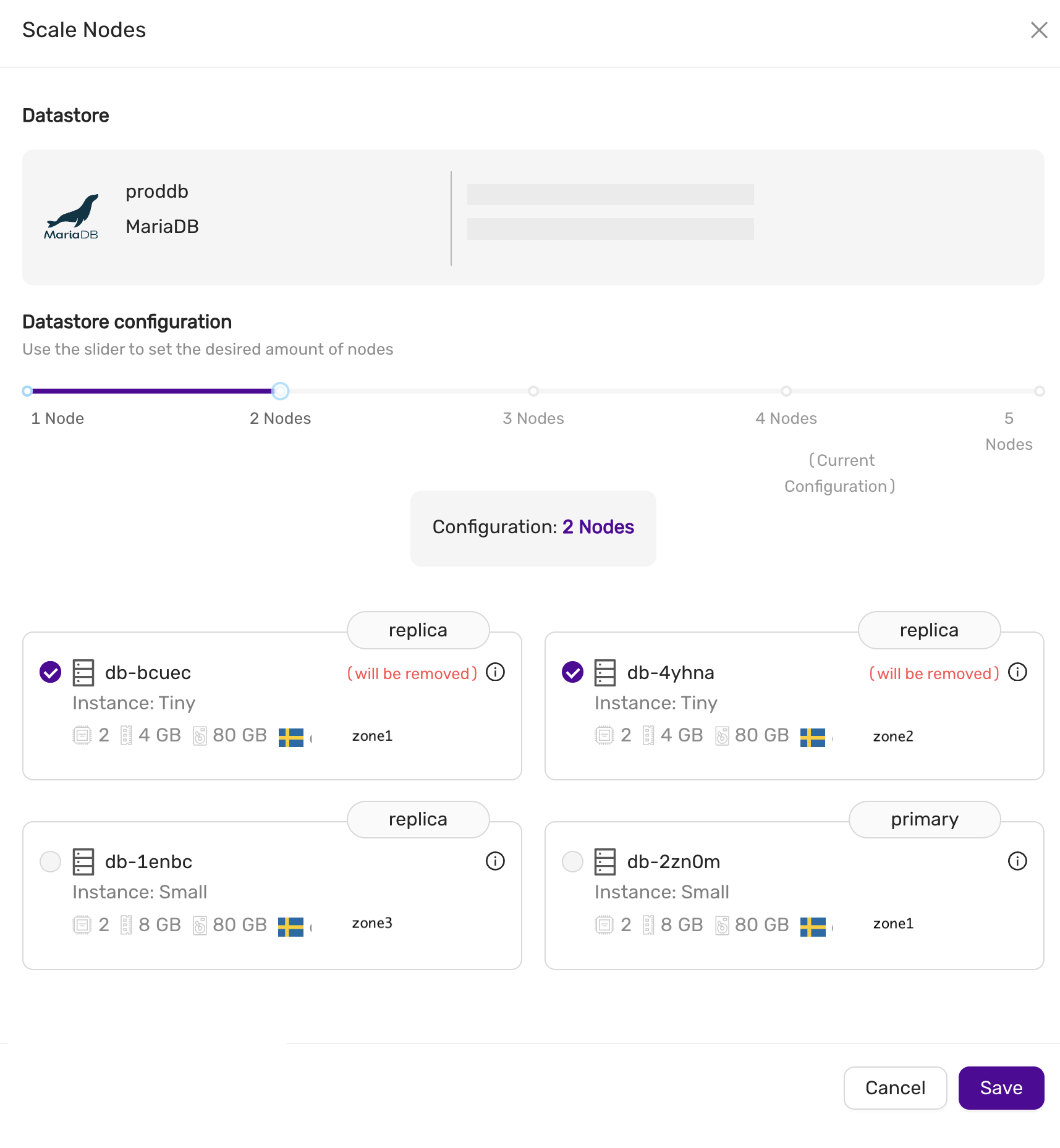

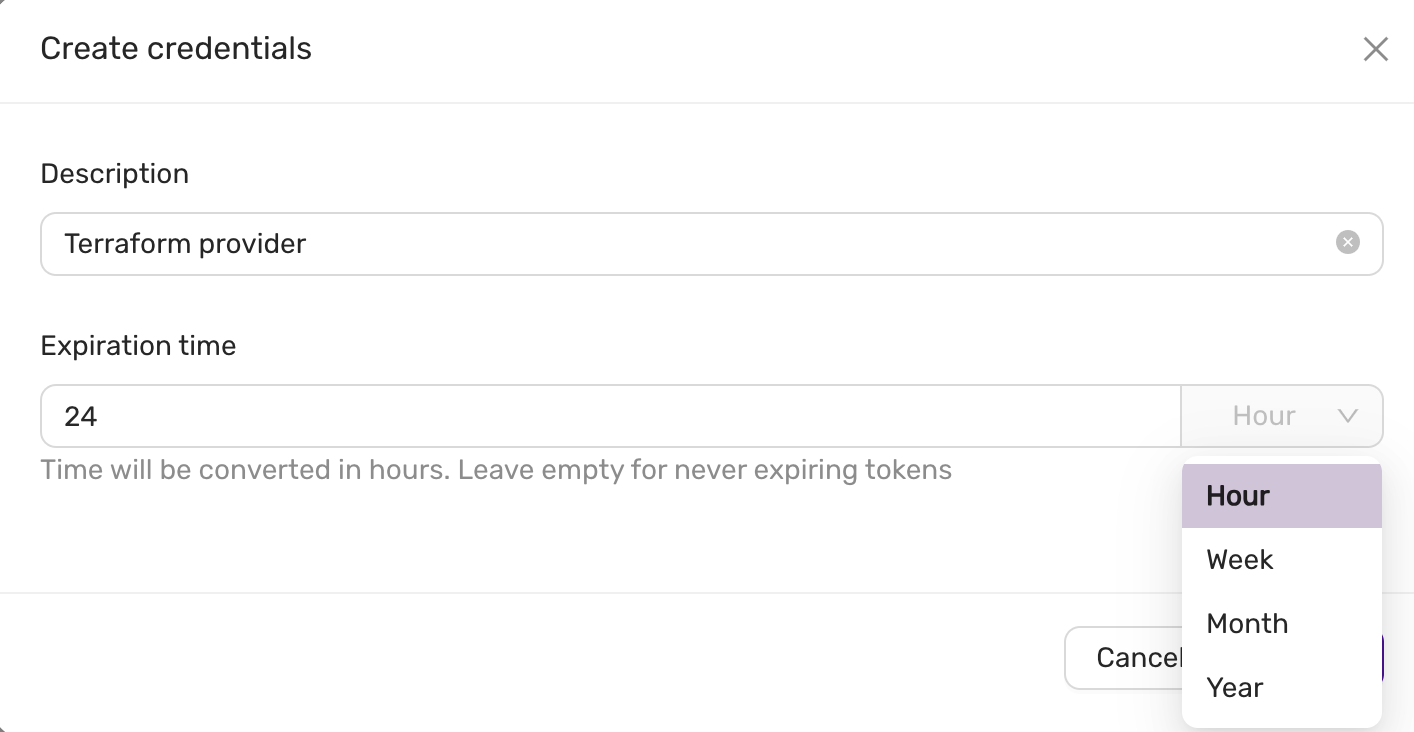

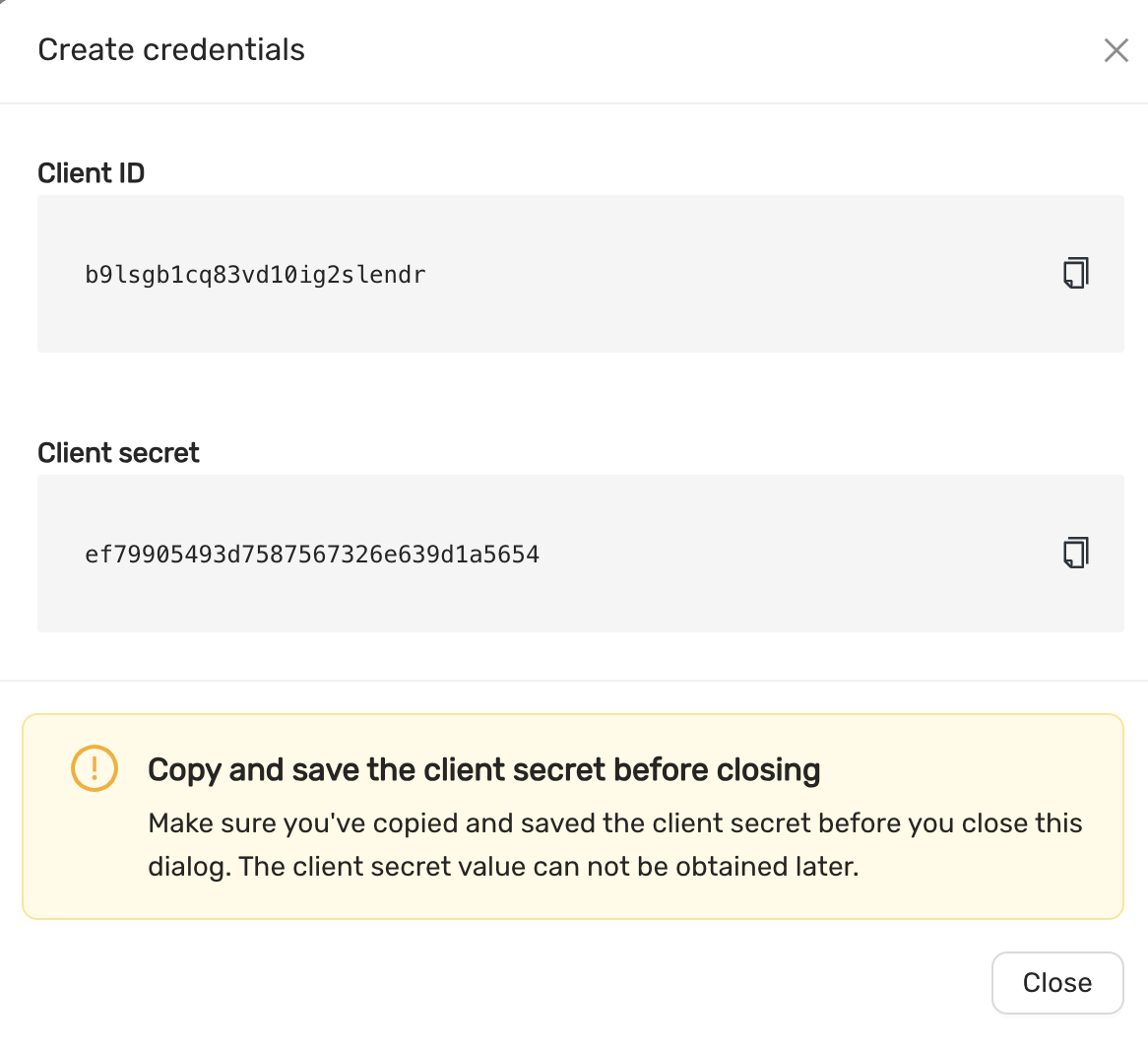

*.*):- SELECT, INSERT, UPDATE, DELETE, CREATE, DROP, RELOAD, PROCESS, REFERENCES, INDEX, ALTER, SHOW DATABASES, CREATE TEMPORARY TABLES, LOCK TABLES, EXECUTE, REPLICATION SLAVE, REPLICATION CLIENT, CREATE VIEW, REPLICATION_SLAVE_ADMIN, SHOW VIEW, CREATE ROUTINE, ALTER ROUTINE, CREATE USER, EVENT, TRIGGER, GRANT